Visual Consistency in Ecommerce UX: The Right Photos Turn “No Difference” Into a 13% Increase in Proceeding to Checkout [Case Study]

A lot of AB testing and conversion rate optimization discussion is focused on adding or removing elements from the site. For example:

- Does free shipping messaging help or hurt? (Our case study on this)

- Should we add quick links on the homepage? (Our case study on this)

- Does an upsell after adding to cart help or hurt? (Our case study on this)

- Does a sticky add to cart element help or hurt? (Our case study on this)

When running and writing about those tests, what is often given secondary importance is the visual aesthetic of the new layout or designs being tested.

Even the way ecommerce teams, our clients, talk about these tests is mostly about whether these elements exist or not: “So should we keep the carousel or not?” They don’t place as much value on the details of the design of that element once it’s added.

What I mean is that:

- The font, placement, size, and colors of your free shipping messaging could affect whether it increases conversion rate or not.

- The photography, layout, and design details of how you propose the upsell could affect whether it increases conversion rate or not.

- Same for sticky add to cart.

These details are tedious to test. If you design something you think is good and the test shows no difference, what do you do? How do you even know if it’s that the whole hypothesis is proven false (e.g. maybe upsells don’t increase AOV for this site), or it’s just a design detail which, if you fixed, would change the outcome?

You don’t know. That’s the hard part.

And practically speaking, most AB test programs typically have a queue of other tests the team (and executives) are itching to launch. So you don’t have the luxury of trying 5 different design concepts for one hypothesis (Aside: We don’t use hypotheses, we ask questions instead).

But that doesn’t mean you shouldn’t recognize and keep in mind that the details of designs can affect the outcome of tests, sometimes heavily.

Running an increasing number of ecommerce AB tests lately has reminded me of this. Here I’ll profile one particularly telling example.

At the bottom of each category page (or “product listing page”) for an electronics ecommerce site, we wanted to test putting links to the other categories. There was one extremely dominant category on this site and we wanted to expose customers to complementary products in other categories.

We had two design concepts drawn up to test whether these category links would increase sales of other products or affect conversion rate in any way. Functionally, they were identical, but one used product photos and the other used lifestyle photos. Here are some mockups using a camping site as an example (not our client).

Adding a “Discover More” section using product only photos:

Adding the same section using lifestyle photos:

Here is the key result: the product only photos increased proceed to checkouts by 13.5% with 97% statistical significance and increased transactions by 23% with 89% statistical significance while the lifestyle photos showed no statistically significant difference in any key metric. Specifically, proceed to checkouts and transactions did not get higher than 50% statistical significance in the lifestyle photo version — in other words, not even close to statistical significance.

(The test ran for 3 weeks, with over 550 proceed to checkouts and over 20,000 sessions per variation.)
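For readers who want to sanity-check numbers like these, here is a minimal sketch of one common way to estimate significance: a two-proportion z-test. The counts are illustrative placeholders chosen to sit in the range described above, not the actual test data, and the function name `lift_and_significance` is hypothetical. Testing tools differ in how they compute “statistical significance” (one-sided vs. two-sided tests, Bayesian methods), so treat this as an approximation, not as the method used for this test.

```python
import math


def lift_and_significance(control_conv, control_n, variant_conv, variant_n):
    """Return (relative lift, two-sided p-value) from a two-proportion z-test."""
    p_control = control_conv / control_n
    p_variant = variant_conv / variant_n
    lift = (p_variant - p_control) / p_control

    # Pooled rate under the null hypothesis that both variations convert equally.
    pooled = (control_conv + variant_conv) / (control_n + variant_n)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / variant_n))
    z = (p_variant - p_control) / std_err

    # Standard normal CDF computed from the error function.
    cdf = 0.5 * (1 + math.erf(abs(z) / math.sqrt(2)))
    p_value = 2 * (1 - cdf)
    return lift, p_value


# Illustrative placeholder counts (NOT the real test data).
lift, p_value = lift_and_significance(550, 20_000, 624, 20_000)
print(f"lift: {lift:.1%}, significance: {1 - p_value:.1%}")
```

With small differences in counts, the significance figure can swing quite a bit, which is one reason the same design idea can look like “no difference” in one variation and a clear win in another.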

What’s interesting is that both our team and the client’s design team preferred the lifestyle photos! We felt the existing pages were too full of “product on grey background” images and the lifestyle photos added interest and color to the page. We thought it helped the overall brand look and feel.

But if we had run only the lifestyle photos, we would have concluded this test made no difference and moved on. Instead we saw a sizable increase in visitors making it to the checkout page and a non-negligible increase in transactions (albeit with only 89% statistical significance) in the product only variation. So we can see that this idea will likely help conversion rate and sales on the site and is worth either implementing or at the very least exploring further.

(Note: A 13% increase in customers making it to checkout is substantial, and it’s very common to see a statistically significant increase in checkout pageviews before you see the same increase in actual transactions, so a 97% stat sig increase in proceed to checkout along with an 89% stat sig increase in transactions means you are on to something).

The difference was just a change in photos. This is something many teams would not test. We almost didn’t test it. But this difference mattered here. Our hypothesis of why is that the product photo version was visually consistent with the rest of the page. Although the lifestyle photo version looked more visually interesting, perhaps people were more likely to dismiss that section as ads (banner blindness) or just not give it the same attention as browsing the products above because it didn’t look like the products above.

Either way, the lessons are clear:

- When you’re testing “Does this thing help or hurt?” don’t forget that the details in how you design “this thing” may affect the outcome of the test.

- If you have the luxury of being able to test multiple variants of a concept, do it.

- If you can only test one variant, design multiple variants first and discuss as a group the pros and cons of each design, so you can hopefully settle on the version that gives you the best chance.

- When discussing the designs, don’t forget about visual consistency on a page. It’s not just about what you think will get the most clicks for that element, but also about how it fits with the rest of the page. Be careful about throwing in a design that is strikingly different from everything else. That doesn’t mean you should never do it (sometimes you want things to stand out), but think carefully about whether a visual inconsistency is intentional and will have your desired effect.

Sticky Add to Cart Button Example: Actual AB Test Results

In our work with dozens of ecommerce companies over the last 5 years, we’ve noticed that the design and UX of the add to cart section (buy buttons, options, quantity controls) is debated a lot.

- Should you make the add to cart button sticky?

- Should we make the entire section sticky?

- Should we hide some selections (size, color, flavor) or show all?

- Should we show all sizes as buttons or shove them in a dropdown?

- In what order should we present the different options?

This case study is about the first question.

In this article, we present results from a couple AB tests where we tested having the entire add to cart area be sticky versus not sticky on desktop devices for an ecommerce site in the supplement space.

The variation with the sticky add to cart button, which stayed fixed on the right side of the page as the user scrolled, showed 7.9% more completed orders with 99% statistical significance. For ecommerce brands doing $10,000,000 and more in revenue from their desktop product page traffic, a 7.9% increase in orders would be worth $790,000 per year in extra revenue.
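The revenue figure above is simple arithmetic, sketched below so you can plug in your own numbers. It assumes average order value stays the same, so that a lift in orders translates one-to-one into a lift in revenue; the function name `extra_annual_revenue` is hypothetical.

```python
def extra_annual_revenue(baseline_revenue, order_lift):
    """Extra revenue from a relative lift in orders, assuming AOV is unchanged."""
    return baseline_revenue * order_lift


# $10,000,000 baseline with a 7.9% lift in orders.
print(round(extra_annual_revenue(10_000_000, 0.079)))  # 790000
```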

But, not all instances of making add to cart controls sticky have increased conversion rate, as we share below.

In our experience, sticky add to cart areas on desktop product pages are less common than on mobile, so this result suggests many ecommerce brands, marketing teams, and store owners may benefit from AB testing sticky add to cart buttons or entire add to cart areas being sticky on their product detail pages.

In general, this type of test is squarely in the “Usability” purpose of our Purpose Framework.

Usability tests are changes to UX, and typically what most people think of when they hear “AB testing”. But generally, they aren’t where you get consistent, long term increases in conversion rate, because most modern ecommerce websites already have good enough UX. Instead, we suggest: (1) tracking and plotting which purposes you’re testing more often and which are winning and (2) being more intentional about deciding which purposes are likely to move the needle for your customers and systematically focusing on them.

We explain more in our Purpose Framework article linked to above for those that are curious. You can also see all ecommerce A/B tests we’ve ever done, organized by purpose, in our live database.

Note: If you’d like to run AB tests like this to help increase your ecommerce site’s conversion rate or improve user experience, you can learn about working with our ecommerce CRO agency here.

Building a Data Driven Culture: AB Tests Can Help Settle Endless Debates

The conference room: where the sticky add to cart button is likely debated non-stop with little useful progress made.

When you read about AB tests online, most articles have a nice predictable pattern: (a) we had a great hypothesis, (b) we tested it, (c) it worked and got a conversion lift!

But when you do enough AB testing (we run hundreds of AB tests on ecommerce sites every year, which we transparently catalog here), you come to learn that most tests don’t end up so neat and clean.

So instead, we urge you to think of an alternative, but also valuable use case of AB testing:

Run a test to learn about your customers even when you could make arguments for either variation being “better” and there isn’t consensus on which one is “obviously going to win” (a phrase we often hear clients use).

Let’s use this article’s example to learn why this “ab tests for learning” use case is so useful: For this sticky add to cart button example, in the traditional design process, people in the company would debate in a conference room (or by email, or in Slack), about having a sticky vs. non-sticky add to cart area on their product page.

They’d go in circles fighting with each other about which is better, which is more on brand, which competitors do something similar, and on and on.

Then, either the higher ranking person wins or an executive steps in and gives their official decision like Caesar at the Colosseum (even though 99% of the time the executive is not a UX expert).

But in reality, neither side knows which will do better. Both arguments are legitimate. And the one important contingent that does not have a seat at the table is the customer. An AB test lets your customers give their input.

And, finally, whichever variation “wins” is important but not as important as the learnings about how your customer thinks, what they prefer, and what is more persuasive to them, all of which you could learn by simply running a test.

So, with all this on our minds, we ran this test.

The Variations: Fixed vs. Sticky Add to Cart Buttons

The variations were simple. In one of them (A), the buy box was not sticky, and in the other (B), it was sticky. (Aside: Read why we anonymize clients here.)

In this case, the client’s product page had a sticky buy box (variation B) to begin with, and it hadn’t yet been tested. The reason we decided to test this was because there was a content-focused culture around the brand, so we felt it was important to learn how much users want to be left alone to read content versus having a more in your face request to buy following them down the page.

One can make a theoretical argument for both variations:

- Argument for variation A (not sticky): You don’t want the site to act like a pushy salesperson, hovering over your shoulder when you’re just trying to read about the product and its benefits. It will turn people off.

- Argument for variation B (sticky): People can read about the product just fine, and reminding them of the ability to add to cart will increase the percentage of people that do so.

This is why, by the way, we use The Question Mentality and base A/B tests on a series of questions instead of old fashioned hypotheses where someone pretends they know what the outcome will be (“Sticky is better! Trust me!”).

In this test there are really just two main questions to answer:

- Does making the add to cart area sticky affect add to cart rates?

- Does it affect conversion rate too? In other words if people add to cart more, do they actually check out more?

Results: Sticky Add to Cart Button Gets More Orders by 8%

In this test, the sticky add to cart variation showed 7.9% more orders from the product pages with 99% significance. The sticky version also showed an 8.6% increase in add to carts with 99%+ significance. The test ran for 14 days and recorded approximately 2,000 conversions (orders) per variation.

Referencing the arguments for either side above, this test gave the marketing department and us valuable information about the customer (and saved a potentially conversion hurting future change of undoing the sticky add to cart area).

Despite this brand’s heavy focus on content, despite the customers’ needs to read a lot about product uses, benefits, ingredients, and more, having an ever-present add to cart area seemed to be okay with the customers. It did not annoy them, and in fact seems to have increased the percentage of them that decided to purchase. This is a useful learning, despite this test largely being in the usability category, not desirability.

(Note: This is true of this store, it may not be true of yours. Hence we always suggest at the end of case studies that you should “consider testing” this. We don’t say you should just “do” this.)

This learning can actually be extended beyond the product pages to content pages such as blog posts where we can test being more aggressive with product links and placements to see if similar results can be achieved there.

This is why we love using AB testing for learning.

Update: Sticky Add to Cart Button on Mobile Product Pages Also Increased Conversion Rate

Since first writing this case study, we tested multiple UX treatments for a sticky add to cart button on the mobile PDP of this exact same supplement ecommerce store. The smaller screen real estate on mobile devices, of course, means finding CTAs like the add to cart button can be more difficult, so making the add to cart button sticky could improve user experience.

We tested three variations: (A) No sticky add to cart button (B) Sticky add to cart button that simply scrolls the user to the add to cart area on the product page (C) Sticky add to cart button that causes the add to cart area to slide up from the bottom of the page like a hidden drawer.

We observed a 5.2% increase in orders for variation C where the add to cart area slides up like a drawer, with 98% statistical significance. This test ran for 14 days and had over 3,000 conversion events (orders) per variation (so over 9,000 conversion events total).

Add to cart clicks increased by a whopping 11.8% on variation C (>99% statistical significance) and by 6% on variation B. So actual use of the button was substantially increased by making the add to cart button sticky on the mobile PDP.

Variation B — where clicking on the sticky add to cart button simply scrolls users to the add to cart area on the PDP — on the other hand showed no statistically significant difference in conversion rate from the original. As per an insightful reader comment below, this discrepancy between variation C showing a clear lift and variation B showing no difference could be explained by:

- The slide up “drawer” of add to cart functionality (choosing a flavor, quantity, etc.) in variation C may have kept users focused on that step because it feels like you’re in a new “sub-page” of sorts instead of just scrolling to another part of the PDP.

- It also means that in variation C, add to cart functionality (like choosing flavors) took up no space on the PDP itself, so users got to see more persuasive “content” about the product.

This suggests that similar conversion gains can be realized on mobile product pages, but the details of how to implement them and the UI/UX that will cause a conversion increase are important. Questions? Let’s discuss in the comments.

If you have questions about whether your store should test sticky add to cart functionality, how to execute it (e.g. Shopify plugins vs. manual coding), or about working with us, you can ask us in the comments, send us an email, or learn about working with us.

Or join our email newsletter to get our latest articles and AB tests.

Our Foundational Ecommerce CRO Articles

- Our Purpose Framework

- Our Question Mentality

- Our live database of all A/B tests we’ve ever done

- Our collection of ecommerce UX breakdown videos

Other Single A/B Test Case Studies

E-commerce Free Shipping Case Study: How much can it increase conversion rate?

We know free shipping is a massive needle mover for ecommerce customers. In this short case study we share results from two AB tests we’ve done that help answer:

Where is the best place to put your free shipping and free returns messaging to get the biggest lift in conversions?

Test 1: Free Shipping messaging placement for furniture ecommerce site increases conversion rate 19%

Hypothesis:

On the original site, free shipping and free returns were already mentioned in the promo bar at the top of the page, which was visible sitewide.

We hypothesized that due to (a) banner blindness and (b) too many competing messages in the promo bar, this message was not getting across.

Where could we place this message that would be least likely to be missed and most likely to influence the buying decision?

We settled on placing it below the add to cart button on the product detail pages (PDP).

Result

We saw a 19% increase in orders with 99.9% statistical significance. The test ran for 2 weeks and recorded over 1,500 conversions.

Implications

In many ways, this test is fascinating. In the original, the free shipping and free returns messaging is already mentioned in the promo bar, at the top of the page, sitewide.

How could customers not see this?

This result suggests there is truth to the idea that banner blindness and competing messaging hurt the effectiveness of that message.

If you offer free shipping and free returns, or have other key value propositions (like an active discount code or promotion) you should strongly consider testing where free shipping and returns messaging is placed, and certainly test adding it near your add to cart button on the PDP. Most brands from what we’ve seen either put them in promo bars (not bad) or save them for graphics on the homepage (much worse).

Test 2: Free Shipping copy for a Supplement Company Does Not Affect Conversion Rate

We tested something very similar for a niche supplement company.

Hypothesis

In this case, we actually hypothesized it would perform better because there was no mention of free shipping on the site except in fine print. (Definitely not in a sitewide promo bar like the example above).

Just like the above test, we put free shipping copy below the add to cart button:

The only differences were:

- The copy said “Free US Shipping & Returns” instead of “Free Shipping & Free Returns”

- There was a dropdown caret that had more details on the 30 day return policy. The schematic above for B (our variation) shows the caret expanded. Upon pageload it was collapsed, i.e. the box with return details was not visible.

Results

After 2 weeks and over 5,000 conversions, we saw no difference in conversion rate between the original and variation. The conversion rates were almost identical!

Implications

For this brand we actually tried a few different placements of free shipping copy including in a promo bar and still found it made no difference on conversion rate.

Why could that be?

AB tests tell you what and you have to hypothesize as to why.

In this case it could be several reasons:

- This is a specific, niche supplement space where there are only a few providers and most provide free shipping, so it may be expected by the customer.

- This is a much lower price point than the first example (furniture) so perhaps in the first example the thought of a hefty shipping cost and hassle of returning furniture is a huge friction point that the copy helped assuage.

- The supplement brand is very content heavy, so readers may be far more sure they want to buy after reading up on the details and details like shipping cost don’t matter as much.

- Finally, the customers for the supplement brand may simply be less price sensitive due to its niche characteristic. In fact, later we did pricing tests that also showed little difference (to be profiled in a later case study).

Disclaimers

One lesson we’ve learned over and over is that while there are UX patterns that seem to perform better across multiple ecommerce sites, there are always plenty of exceptions. So what works for one site, doesn’t always work for another. The two examples above show that.

So we encourage you to learn, take inspiration, and think critically about the case studies above and how they may apply to your store. Then, we encourage you to run your own tests before simply implementing these UX treatments on your site.

If you’d like to talk to us about improving conversion rates for your ecommerce brand, you can learn more about working with us here.

Mobile Checkout Best Practices and Trends in Ecommerce

Our ecommerce conversion optimization team has done an extensive analysis of the mobile checkout experience of the top 40 ecommerce sites in the U.S. (by traffic, according to the Alexa.com Shopping category). In this article, we present the results and analyze the impact of this data on current mobile checkout best practices and mobile ecommerce trends.

This analysis includes:

- Key UX features in mobile checkout for each site (24 features total)

- The percentage of the top 40 sites that employ each feature

- When applicable, AB test data we have for each feature

- Our conversion optimization team’s recommendation for each feature

We’ve divided our analysis into 4 sections of mobile checkout:

Each section has between 4 and 8 features analyzed, with a total of 24 features discussed.

We go beyond just listing features to discussing which should be considered mobile checkout best practices and which can be ignored. For example, you’ll learn insights like:

- What percentage of these sites employ payment systems like Apple Pay

- What percentage have a save your cart feature?

- Does it matter if you add trust badges on shipping and how many sites do?

- Has our team AB tested these features and if so, what have we concluded?

- For 24 unique mobile checkout features

At the end, for reference, we’ve included screenshots of the mobile checkout flows of each of the top 40 sites we analyzed.

Bonus: No time? Get a concise PDF summary of the data and our recommendation for each feature.

You can find details of our methodology, including the full list of the top 40 sites, at the bottom of this page.

Finally, if you’d like to apply to work with us to increase your ecommerce conversion rates via AB testing, you can do so here.

Why Mobile Checkout Best Practices Are So Critical

Mobile checkout is arguably the most important ecommerce conversion optimization trend today.

Why?

Because most ecommerce stores have more mobile traffic than desktop, and that share is only going to grow.

But mobile conversion rates are much lower than desktop. We typically see mobile conversion rates hover around half of desktop.

Brands with the best mobile checkout experiences will have a massive advantage over competitors for years to come. It’s our hope that this study will help your ecommerce site improve its mobile conversion rate through an improved checkout experience.

Mobile Shopping Cart Page Trends

Cart features we analyzed:

- Add to Cart Button Takes User To?

- Number of Upsells in the Cart

- Checkout Button Above the Fold

- Proceed to Checkout Button is Called?

- Displays Secure Checkout or Trust Badges

- Total Savings Highlighted in a Separate Line Item?

- Save Cart for Later Option?

- Keep Shopping Link?

Add to Cart Button Takes User To?

Designers love to talk about “minimizing clicks”. So in ecommerce companies, deciding what happens when the user clicks “add to cart” can be a source of debate:

Should you take them straight to the cart? This minimizes clicks if they will only check out with one item, but increases it if they will add multiple. For example Etsy.com, BestBuy.com, and Wiley.com all do this.

Should you use a temporary notification? (Appears, says they added to cart, then disappears) This bothers the user the least but may not be “in your face” enough to encourage checking out. Nike.com, HM.com, and Macys.com all do this. In general “sliders” or “drawers”, even on desktop checkout flows seem to be an ecommerce checkout design trend in the last few years.

Should you use a permanent notification that pops up or slides up? This is very clear, but may create more clicks for the user. Here is Walmart’s:

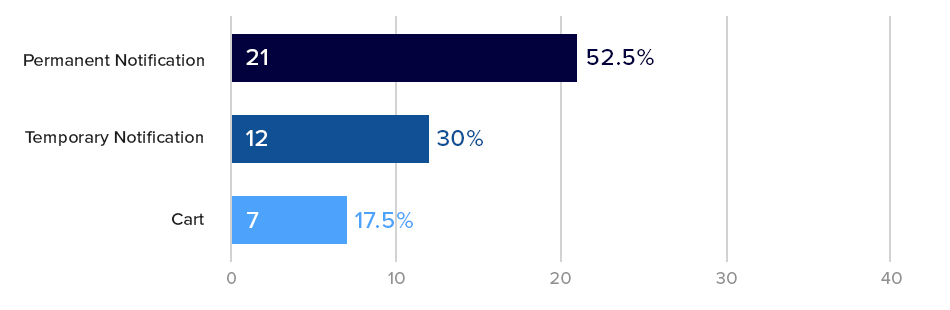

The majority (over 50%) of our Top 40 sites used a permanent notification. These are very similar to taking the user to cart, because they have an option to proceed to checkout immediately or review cart or continue shopping.

The key difference is a permanent notification can often be closed (with an “X” button) and the user remains on the product page without waiting for page loads, so the experience is faster than sending them to the cart.

What is Best Practice for Add to Cart Notifications? Our AB Tests Show…

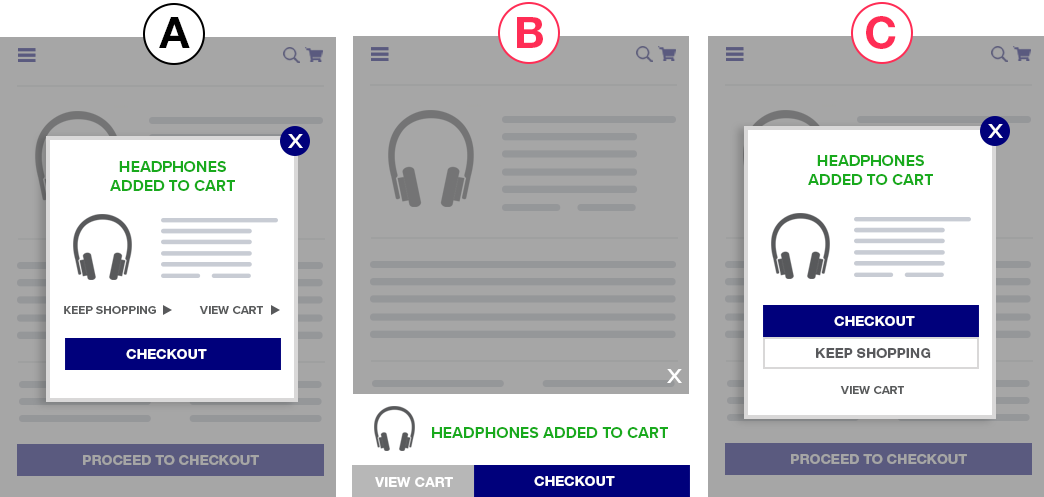

We’ve tested different add to cart notifications before and haven’t seen large, impactful changes in conversion rate (on both mobile and desktop).

For example in one mobile specific test, we tried different styles of a permanent notification with differing amounts of information and size.

We saw clear differences in how many users clicked Proceed to Checkout vs. View Cart buttons (10% – 12%) with statistical significance but saw no net change in orders or revenue.

This suggests that the format of the add to cart notification may not make a huge impact in actual mobile conversion rates. As per our usability vs. desirability optimization framework, changes that affect a user’s desire to check out (desirability) usually have a bigger impact than reducing friction (usability). This is a usability tweak so it’s unsurprising that it didn’t make a huge impact.

Growth Rock Recommendation: For high traffic or high transaction volume sites, this may be worth testing, but don’t hold your breath for large changes in mobile conversion rates (> 5%).

Number of Upsells in the Shopping Cart

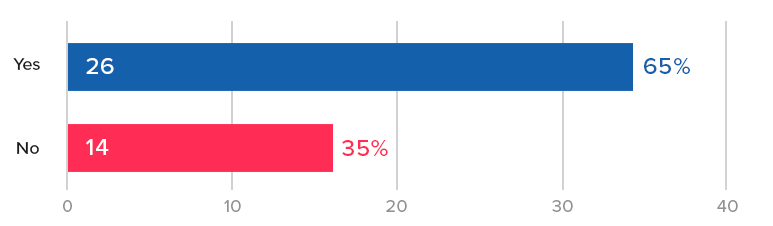

65% of our top 40 ecommerce sites have upsells and cross sells on the cart page. Surprisingly 14 of the 40 sites we analyzed did not have any upsells or cross-sells on the cart.
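The percentages quoted throughout this analysis are simple share-of-sites figures. Here is a minimal sketch of that calculation using the upsell counts above; the function name `adoption_rate` is hypothetical.

```python
def adoption_rate(sites_with_feature, total_sites=40):
    """Percentage of analyzed sites that have a given feature."""
    return 100 * sites_with_feature / total_sites


without_upsells = 14
with_upsells = 40 - without_upsells

print(adoption_rate(with_upsells))     # 65.0
print(adoption_rate(without_upsells))  # 35.0
```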

On average, those that had shopping cart upsells, had 14 products recommended somewhere in the cart.

That’s a lot of products!

Typical mobile shopping cart upsell designs looked like this one, from Sears:

Upsells and cross sells are a huge factor for any ecommerce website because of their power to increase average order value (AOV).

What is the Mobile Shopping Cart Upsell Best Practice? Our AB Tests Show…

In our AB tests, we’ve seen upsells and cross-sells improve AOV significantly, but they are not always guaranteed to be effective.

Thus we strongly recommend every ecommerce site test this for themselves. Test different upsells, test including and not including upsells, and test how and where the upsells are presented.

In multiple tests, upsells have made no difference on AOV and revenue per visitor. As the site management team or owner, you want to know this so you can test alternative products, number of products, position, copy, etc.

On the other hand, sites that don’t have upsells or cross-sells should for sure test them, as they have the potential to significantly increase AOV and (most importantly) revenue per visitor.

None of our tests have yet shown a decrease in conversion rate due to the presence of upsells or cross-sells in the cart.
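To make the relationship between these metrics concrete, here is a small sketch using the standard definitions: AOV is revenue divided by orders, conversion rate is orders divided by visitors, and revenue per visitor is revenue divided by visitors (which equals conversion rate times AOV). All numbers are hypothetical and the function name `cart_metrics` is an assumption for illustration.

```python
def cart_metrics(revenue, orders, visitors):
    """Compute AOV, conversion rate, and revenue per visitor for one variation."""
    return {
        "aov": revenue / orders,
        "conversion_rate": orders / visitors,
        "rpv": revenue / visitors,
    }


# Hypothetical numbers: same traffic and orders, upsells add $2.50 per order.
control = cart_metrics(revenue=50_000, orders=1_000, visitors=40_000)
with_upsells = cart_metrics(revenue=52_500, orders=1_000, visitors=40_000)

for name, m in (("control", control), ("with upsells", with_upsells)):
    print(f"{name}: AOV ${m['aov']:.2f}, CR {m['conversion_rate']:.2%}, RPV ${m['rpv']:.4f}")
```

Revenue per visitor is the metric to watch because it captures both effects at once: an upsell that raises AOV but lowers conversion rate could leave it flat or even reduce it.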

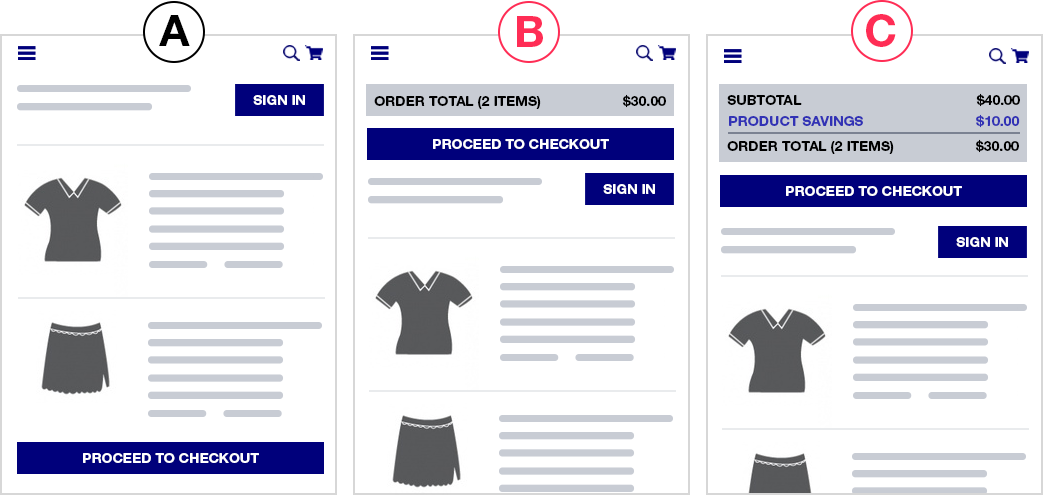

Checkout button is above the fold?

60% (24) of the top 40 sites had their checkout button above the fold on their mobile cart page.

A pretty well accepted ecommerce mobile checkout best practice is to put checkout buttons above the fold. You hear UX experts recommend this all the time.

But again, this is a usability tweak: where the checkout button is doesn’t affect a user’s desire to check out. So should this be a best practice? What does the AB testing data say?

What is the Checkout Button Position Best Practice? Our AB Tests Show…

Because this is a usability tweak, we have only tested this once.

In that test, we did not see a statistically significant change in checkout rate by adding a proceed to checkout button above the fold, contrary to what the well accepted best practice would tell you. In a second variation where we added detailed savings and order total amounts in addition to the button, we actually saw a trend towards a 2% decrease in conversion rate (albeit with only 84% significance).

Growth Rock Recommendation: You can test this as your results may vary (very few UX trends apply to every site) but we’d suggest focusing efforts on bigger potential wins.

Ask what’s actually holding customers back from checking out? Chances are it’s not that they can’t find the checkout button.

Proceed to Checkout Button is Called?

We love to make jokes about people who think AB testing is about button colors and button text and other tiny details.

The vast majority of the time, details like this make no difference.

However, if you’re curious about the proceed to checkout button copy, above is what we found in our top 40 ecommerce sites. Most of the top 40 stores simply called their button “Checkout”.

Growth Rock Recommendation: Again, as per our Usability vs. Desirability framework, we don’t recommend spending too much time on small UX decisions like this.

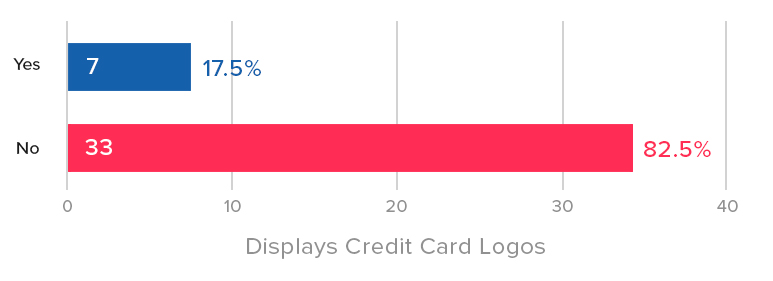

Displays Secure Checkout or Trust Seals

Credibility icons and social proof are, for sure, an ecommerce design trend these days (e.g. 2017, 2018, and 2019). They are among the most oft-mentioned tactics in the conversion optimization community.

But it’s interesting that less than half (42.5%) of our Top 40 sites had a security message or trust seal on their mobile cart page. Even fewer (only 17.5%) had credit card logos. It’s arguable whether credit card logos are necessary today as consumers now expect all sites to take all major credit cards.

Advertising a “secure checkout” experience on the other hand is more controversial. As we indicate in the payment section, our AB tests have not shown a lift in conversion from mentioning security or using security badges, trust seals, or icons.

It’s possible that at least some of the 57.5% of sites we analyzed that don’t mention secure checkout or have trust seals on the cart page have tested this and found it didn’t make much of a difference.

What are Trust Seal Best Practices on the Shopping Cart? Our AB Tests Show…

Of course social proof is a well-known persuasion tactic and we agree with its usage.

That said, we have not seen credibility icons or social proof quotes make a huge difference in most AB tests, in particular in the checkout flow.

As mentioned below, our few tests of credit card and security trust logos on the payment step have not shown a conversion lift.

Growth Rock Recommendation: This is an easy test to run and we suggest you try it as many have reported data suggesting trust seals and security messages improve conversion rate. Just don’t hold your breath for a conversion increase. If you have a trust seal or security message on your site currently, it could be worth testing removing it. In our view, if something is not helping, it’s best to remove it to keep experiences as clean and distraction free as possible.

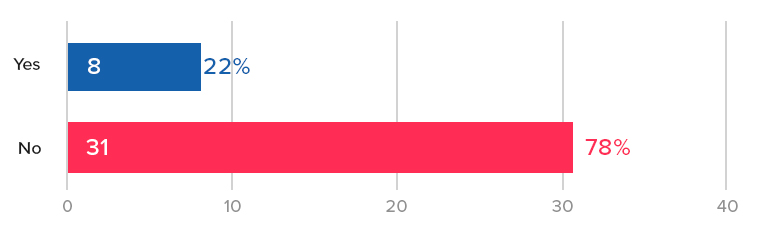

Total Savings Highlighted in a Separate Line Item?

Savings (or, more specifically, perceived savings) are a huge factor for ecommerce stores. We’ve seen evidence of this for many different ecommerce brands: low price (AOV < $30), luxury apparel (AOV > $300), and furniture (AOV > $1000), to name a few. User surveys and customer support interviews in luxury apparel even indicate that customers love feeling like they got a deal or “the best price” (even on a $1000 purse).

But only 35% of the Top 40 sites we analyzed have savings highlighted as a separate line item in their order total in the shopping cart:

So what is the best practice for how a store should highlight savings on the shopping cart page?

Our AB Tests Show…

We’ve observed something interesting in savings highlighting on the cart page: Highlighting savings at the product level seems to be significantly more important than highlighting it at the order total level.

In one store, we saw a 4% increase in revenue per visitor and a 3% increase in checkouts when we highlighted savings on each product, vs. no statistically significant increase in either metric when we only highlighted it at the order total level.

Save Cart for Later Option?

Cart abandonment is such an issue for ecommerce teams that even writing this sentence feels like a cliche.

But on mobile? It’s even worse. Mobile users are notorious for adding to cart and dropping off. So features like this that can capture their email can be really impactful, as long as they don’t hurt checkouts.

Here is an example from Nordstrom:

We’ve tested a save your cart feature on many ecommerce stores and the results have almost always been positive.

Most sites already have this ability built-in, if a user is logged in. So the easiest way to test this is to include a button or link in the cart page that says “Save Your Cart for Later” followed by “by creating a free account”.

In one of our tests, we saw a whopping 250% increase in account creations by adding this link. Why so big? Because most ecommerce sites have a dismal account creation rate among anyone other than people who are already buying. (When is the last time you decided to create an account when buying clothing online, for example?)

So adding this incentive (save your cart) and clear CTA on a very high traffic page (cart) increases account creations dramatically.

But does this hurt checkouts?

This can often be a concern as you’re adding a secondary CTA on the cart page that could distract. Our save your cart tests haven’t shown a drop in checkouts. If anything both showed slight increases in checkouts.

Growth Rock Recommendation: All ecommerce sites should test adding save your cart functionality.

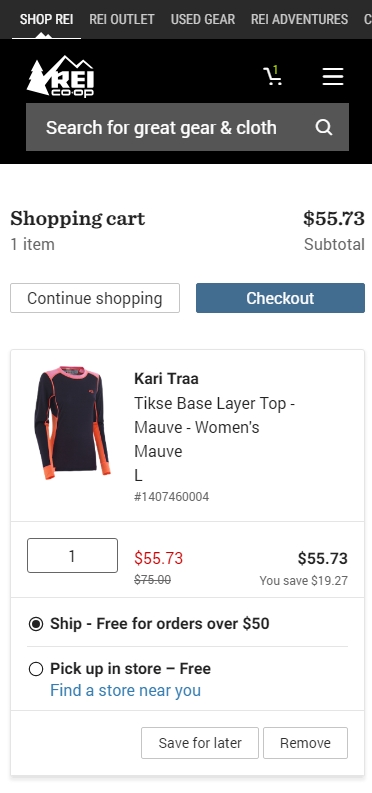

Keep Shopping Link?

Keeping distractions to a minimum is a key conversion principle across all website CRO. You want to keep users focused on their primary desired actions.

Thus, our team feels that a “Keep Shopping” or “Continue Shopping” link is not useful on the cart page. Users can already continue shopping in many ways.

- Back button

- Logo to go to the homepage

- The full navigation menu, which is present on almost all cart pages

Instead, we feel it can serve to distract from the primary CTA of proceeding to checkout.

Our AB tests show…

In fact, one of our “save your cart” AB tests involved replacing the “continue shopping” link with “save your cart”, and we saw orders trending positive by 6% vs. original, albeit with only 83% statistical significance.

We hypothesized that removing the prominent “continue shopping” link in that instance may have been the true cause of the slight potential conversion rate increase rather than the save your cart link in that test.

About half of the top sites we surveyed had a continue shopping link. Notable sites without such a link in the cart include two of the biggest ecommerce sites today: Amazon, and Walmart.

Notably, for larger ecommerce stores like those, search is a major feature, and since normal nav elements remain on the cart, the search bar is, in effect, another “continue shopping” option for the user, rendering the “continue shopping” button less useful.

This redundancy is made starkly clear on REI’s mobile cart page:

Growth Rock recommendation: Test removing your continue shopping link. In fact, consider replacing it with a “save your cart” link from the item above.

Pre-Checkout

Jump to:

- Guest Checkout Option?

- Separate Page to Choose Guest Checkout?

- Continue with Social Media Account Option?

- How Many Pages is the Mobile Checkout Flow?

Guest Checkout Option?

The vast majority (75%) of the sites we investigated have a guest checkout option. Not having one is widely regarded at this point as a conversion “killer”.

These brands in our list do not have guest checkout and require account creation to checkout:

- Amazon.com

- Zappos.com (owned by Amazon)

- 6pm.com (owned by Amazon)

- Costco.com

- Target.com

- Wayfair.com

What do you notice? The majority are huge brands with household brand names in the U.S.

Half are Amazon or owned by them. Amazon, of course, is built on registrations, which feed its business model, including growing Prime subscribers.

Costco won’t even let you check out of their physical retail stores without being a member, so that goes without saying.

So the only two unexpected brands on this list are Target and Wayfair. They are both big brands, one in brick and mortar retail, and the other online, but other than size and brand recognition, nothing in their brand ethos would suggest it’s an obvious move to require sign in. Thus, it’s an interesting decision by them to demand users create an account.

Notably, other household brand names as big or bigger than Target and Wayfair allow guest checkout. In particular, Walmart, Home Depot, and eBay (which historically started out requiring sign-up to bid on items and only later allowed guest checkout).

Growth Rock Recommendation: Unless you have the brand recognition and size of Target and Wayfair, you should probably stick to allowing guest checkout. At the very least AB test removing it and calculate whether the drop in immediate purchases is made up for

Separate Page to Choose Guest Checkout?

The majority of top ecommerce sites (56%) still send users to a separate page prior to the start of the checkout flow to choose whether to use guest checkout or create an account.

Once again, traditional UX theory suggests “minimizing clicks” should help conversion rate.

Our AB Tests Show…

We’ve seen indications of a conversion lift by removing this page but nothing particularly convincing. The closest was a test run to 880,000 visitors, where removing this page (and sending customers straight to the checkout page) showed an improvement in conversion rate of 1.5% – 2%, which held steady over multiple weeks but ended with only 86% statistical significance.

Growth Rock Recommendation: We suggest you test this yourself if you have the resources to run at least 1 – 2 tests a week. If your AB testing bandwidth is limited, focus on bigger wins. If you run this, pay attention to a potential tradeoff between new account creations and completed orders.

Aside: 84% of these pages present guest checkout second to sign in for returning users. While we haven’t tested this (if removing this page entirely makes only a small difference, optimizing this page doesn’t seem like a good use of time), we find it interesting that almost all brands visually prioritize returning user sign in over guest checkout when it’s widely accepted that guest checkout is necessary because the majority of checkouts are from non-registered customers.

Second, on mobile, 36% of the sites don’t even have the guest checkout option above the fold (on iPhone 8). This seems like an easy UX fix to make to improve mobile conversion rates.

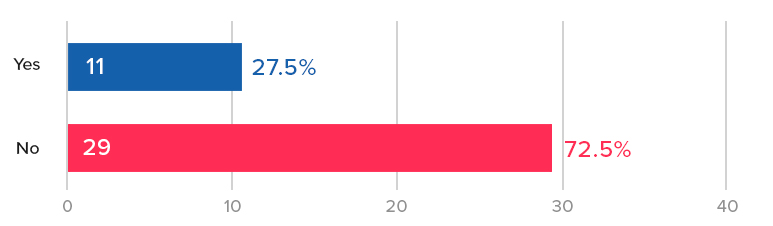

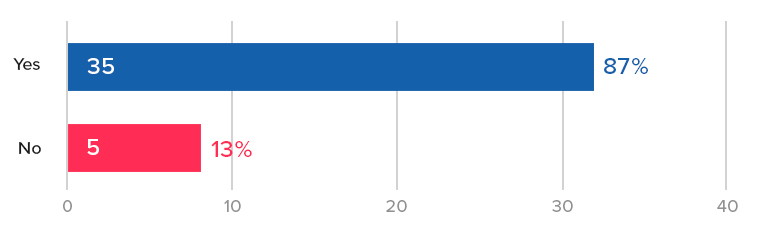

Continue with Social Media Account Option?

Poor mobile checkout rates are the massive elephant in the room problem for ecommerce stores. Most crossed over from “majority desktop” to “majority mobile” traffic sometime in the past 3 years. But mobile conversion rates are abysmal compared to desktop.

One big reason for that is that filling in a bunch of forms on your phone still stinks. Consumers just don’t want to do it. So we’ve seen time and again AB tests that improve mobile Add to Cart rates but barely move the needle on completed mobile orders.

Continue with Facebook, Continue with Google, etc. help users out by pulling in the address already known to that social platform, so they no longer have to fill all that in.

We found only 27.5% of the sites we examined have social options for checkout. We’re curious to see if that increases with time.

Growth Rock Recommendation: Test adding continue with social buttons as an alternative to normal checkout.

How Many Pages is the Mobile Checkout Flow?

Mobile users have less patience for page loads, so the conventional wisdom is to minimize the need for page loads, which slow them down and inevitably cause some fraction to bounce at each page load.

The average (and median) number of pages in the checkout flow of the sites we examined was right around 4.

The highest was REI.com at a whopping 7 pages of checkout (6 plus one for choosing guest checkout).

Each of their pages is small and easy, asking for just one thing at a time.

On the other hand, HomeDepot.com has only 2 pages (assuming you don’t checkout with an appliance that needs insurance coverage, etc.): (1) guest or sign in (2) the entire checkout form on one page.

The counter-argument to reducing checkout pages is that long forms on one page are intimidating and may scare away the user. The Home Depot checkout page looks intimidating as a long screenshot but on your phone you’re only seeing one part at a time.

Growth Rock recommendation: We’ve heard both sides of this debate in web UX in general, not just ecommerce checkout. We suggest you test this for yourself.

Mobile Shipping Page Trends and Best Practices

Jump to:

- Is There Some Form of Address Detection?

- Do They Have Instant Form Field Validation?

- Do the Number Fields Use Number Keypads?

- Is Site Navigation Hidden on Checkout Pages?

- Estimated Delivery Date Shown?

Is There Some Form of Address Detection?

One of the main reasons for low mobile checkout rates is how tedious it is to fill in forms on mobile.

One way to counter that is with address detection.

55% of the sites we examined had some form of address detection, most of which work like this:

Our team has only tested address detection once in the past few years and we did not see much of a change in conversion rate. It’s worth testing and not hard to implement. The Google Maps API, for example, lets you easily add autocompletion to your form.

Growth Rock Recommendation: It could be worth testing address detection. Form filling on mobile is a known pain point. In our opinion, this should be a mobile checkout best practice because it simply makes form filling easier on the user. Nonetheless it has yet to catch on as an ecommerce trend (mobile or not) and thus the vast majority of ecommerce sites don’t yet have this feature.

Do They Have Instant Form Field Validation?

Continuing on the theme of making form fills as easy as possible, nothing is more annoying to mobile users than filling out a long form, clicking submit, then figuring out there’s an error and hunting around for it.

Yet, 27.5% of our top 40 sites had this poor UX!

Including:

- Lowes

- Wiley

- Bhphotovideo

- Zappos

- Gap

- Forever21

- Gamestop

- Sephora

- REI

The solution is instant form field validation.

Here is the contrast. On Nordstrom, if I enter “1” in zip code and try to move on, it immediately tells me this is not a valid zip code. I fix it right there.

Whereas on Lowes.com, one of the culprits, I can enter “1” in zip code and be on my way and won’t know until I click “use this address”.

Growth Rock Recommendation: We haven’t done AB tests on instant form validation, but we don’t see a reason not to have it. Again, we think this should for sure be a mobile checkout best practice (really on all device types and sizes). Asking users to wait until they submit a form to see errors is just cumbersome. We recommend AB testing this (instead of implementing it outright) so you can see if any unintended consequences of instant validation may hurt your conversion rate. For example, some forms may be too quick to point out errors, causing users who are in the middle of typing an email address or phone number to see a red warning when they simply haven’t finished. If this hurts conversion rate, an AB test will indicate that for you so you can fix the problem.
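The trade-off described above (flag errors right away, but don’t scold a user who simply hasn’t finished typing) can be sketched in a few lines. This is a minimal illustration in Python rather than any site’s actual implementation: the function name `validate_zip`, the `on_blur` flag, and the US 5-digit or ZIP+4 format are all assumptions made for the example.

```python
import re
from typing import Optional

# Assumed format: US ZIP code, 5 digits with an optional +4 suffix.
ZIP_COMPLETE = re.compile(r"\d{5}(-\d{4})?")
# Anything that could still become a valid ZIP as the user keeps typing.
ZIP_PARTIAL = re.compile(r"\d{0,5}|\d{5}-\d{0,4}")


def validate_zip(value: str, on_blur: bool) -> Optional[str]:
    """Return an error message, or None if no error should be shown yet.

    on_blur=False means the user is still typing in the field;
    on_blur=True means they have moved on to the next field.
    """
    value = value.strip()
    if ZIP_COMPLETE.fullmatch(value):
        return None
    if on_blur:
        # The user left the field, so an incomplete value is now an error.
        return "Please enter a valid ZIP code."
    if ZIP_PARTIAL.fullmatch(value):
        # Still a plausible prefix: stay quiet while they type.
        return None
    return "ZIP codes contain only digits."
```

With this split, typing “1” shows nothing, while leaving the field with “1” in it shows the error immediately, which matches the Nordstrom behavior described above.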

Do the Number Fields Use Number Keypads?

Form filling on mobile is a pain because typing is a pain. One way to help is to make sure fields that only require numbers (phone, zip) use the phone’s number keypad with larger, fewer buttons than the regular keypad.

This is also in the category of obvious UX improvements. Yet 12.5% violated this rule including:

- Walmart

- Bodybuilding.com

- Gamestop

- Wayfair

- 6pm

For example here is J.Crew. When you click into zip, it immediately gives you a numbered keypad:

In contrast, here is H&M when you click into zip:

The UX is unnecessarily cumbersome. You have to click the number button on the left and then use the tiny number keys at the top of that keyboard.

Growth Rock Recommendation: You don’t need to AB test this, you can just implement this outright. There is no reason why a full keyboard is necessary for a number only field.
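In the browser this usually comes down to one or two attributes on the form field. The sketch below uses Python as a stand-in for whatever server-side templating a store uses; the helper name `render_zip_input` and the default field name are hypothetical, while `inputmode`, `pattern`, and `autocomplete` are standard HTML attributes.

```python
from html import escape


def render_zip_input(name: str = "zip") -> str:
    """Render a ZIP field that asks mobile browsers for a numeric keypad."""
    attrs = {
        # Kept as text rather than a number input: a ZIP is an identifier,
        # not a quantity.
        "type": "text",
        "name": name,
        # Hint to the browser to show a digits-only on-screen keypad.
        "inputmode": "numeric",
        # Digits-only constraint on the submitted value.
        "pattern": "[0-9]*",
        # Lets the browser autofill the postal code.
        "autocomplete": "postal-code",
    }
    rendered = " ".join(f'{key}="{escape(value)}"' for key, value in attrs.items())
    return f"<input {rendered}>"


print(render_zip_input())
```

The same attributes can be applied to phone number fields, with the `autocomplete` value adjusted accordingly.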

Is Site Navigation Hidden on Checkout Pages?

Distractions are the enemy of conversion rates. On that note, it’s become commonplace to remove normal site navigation in checkout, on both mobile and desktop.

On mobile, this means removing the hamburger menu and other icons in the navbar and often also unlinking the brand logo.

To let customers go back, there’s usually just one small link included. H&M has a great example of this:

However, several (27.5%) of our top 40 sites violated this rule and had full navigation available in checkout. Specifically:

- Amazon – As with many things, Amazon is maybe a unique case because the role the site plays in their customers’ lives is very different from that of other sites.

- Nordstrom – They have a hamburger menu present, although its content is drastically reduced during checkout

- Lowes

- Kohl’s

- Forever21

- RedBubble

- J.Crew

Lowes provides a very busy example of this:

Growth Rock Recommendation: We don’t see a need for sites that don’t have navigation to test adding it back in, however for sites on the list above that do, we strongly suggest AB testing a distraction-free alternative like H&M.

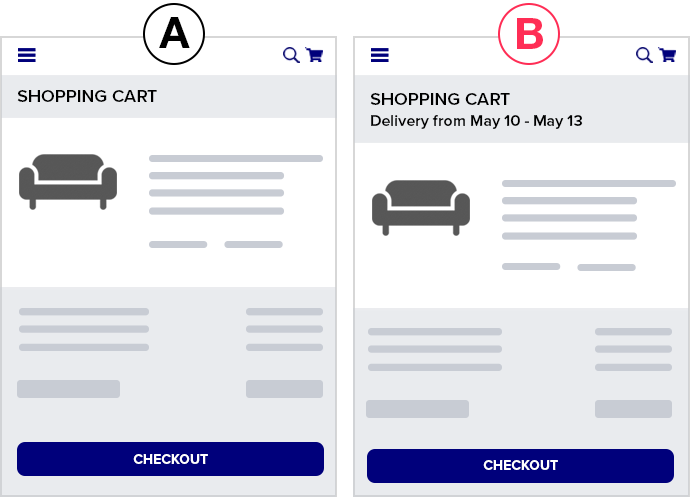

Estimated Delivery Date Shown?

The conversion-motivated reasons for showing a delivery date are twofold:

- Simply answer a potential question in the customer’s mind. They may need it by a certain date or just be curious.

- Increase desire by making the purchase feel more imminent or real. If they think “I could have this by Monday” they may be more inclined to purchase.

65% of our top 40 sites had some indication of an estimated delivery or ship date, suggesting this has not widely caught on or been accepted yet.

We’ve tested adding estimated dates in the mobile checkout flow and have not seen any statistically significant lifts in conversion rate.

A caveat on the above result is that for that client, the estimated shipping and delivery date for each product is already on the PDP, therefore showing it again in checkout may not have added any additional motivation to complete the purchase (there was no question about this in the customers’ minds).

Mobile Ecommerce Payment Page Trends and Best Practices

Jump to:

- PayPal, Apple Pay, Amazon Pay Options?

- Does the Site Auto Detect Credit Card Type?

- Are There Trust Symbols on the Payment Page?

- What is the Final Payment Submit Button Called?

- Does Final Payment Submit Button Appear Above the Fold?

- Is There a Final Submission Confirmation or Review Page?

- Newsletter Opt-In or Opt-Out Option?

PayPal, Apple Pay, Amazon Pay Options?

This is a category to keep your eyes on closely. This could be the game-changing trend in mobile ecommerce over the next few years.

The entire mobile checkout experience including laborious form fills can be almost entirely skipped with an instant payment option like PayPal, Apple Pay, or Amazon Pay.

Look at how easy it is to checkout with Apple Pay on Kohls:

No need to fill in address. No need to fill in credit card. One click on the Apple Pay button and with my fingerprint I’ve paid for my Grinch who stole Christmas pajama pants.

Although this is the first year Growth Rock has published this study, in our own practice we’ve noticed a sharp rise in brands with Apple Pay, for example.

Growth Rock Recommendation: We are actively testing these payment options across multiple clients and strongly suggest you do the same. These instant payment options like Apple Pay should be a growing mobile ecommerce trend and in our mind should be regarded as a mobile checkout best practice (if they aren’t already).

Does the Site Auto Detect Credit Card Type?

In the category of unnecessary UX friction we have: asking the user to select Visa, Mastercard, Discover, Amex, etc.

You can detect it as they type their number, as the abundant discussion that comes up when you Google “detect credit card type” would suggest.

Here is a nice StackOverflow discussion with a good summary of the ins and outs of this.
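To give a sense of how little is involved, here is a minimal sketch of prefix-based detection in Python. It is an illustration under stated assumptions, not a production-ready list: the function name `detect_card_type` is ours, and the prefix ranges are a simplified subset of the published issuer ranges (other networks and some additional Discover ranges are left out).

```python
import re
from typing import Optional


def detect_card_type(typed: str) -> Optional[str]:
    """Guess the card network from the digits typed so far.

    Returns None while the prefix is still ambiguous or unrecognized.
    """
    digits = re.sub(r"\D", "", typed)  # ignore spaces and dashes

    if digits.startswith(("34", "37")):
        return "amex"
    if digits.startswith("4"):
        return "visa"
    # Mastercard: 51-55, plus the newer 2221-2720 range.
    if len(digits) >= 2 and 51 <= int(digits[:2]) <= 55:
        return "mastercard"
    if len(digits) >= 4 and 2221 <= int(digits[:4]) <= 2720:
        return "mastercard"
    # Discover (simplified): 6011 and 65 prefixes only.
    if digits.startswith(("6011", "65")):
        return "discover"
    return None
```

Called on each keystroke, a function like this lets the form show the right card logo after the first few digits instead of asking the shopper to pick from a dropdown.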

Only 10% of our Top 40 sites did not auto detect credit card type, but even that was surprising.

The culprits were:

- Wiley

- Macys

- Lowes

- Costco

- Gamestop

Growth Rock Recommendation: We have not tested this because it falls in the category of smaller usability tweaks that may very well help but often don’t “make the cut” when deciding where to devote AB testing resources. For higher traffic and revenue sites it could very well be worth testing.

Are There Trust Symbols on the Payment Page?

One of the most common CRO “best practices” is to use trust symbols or badges, like these:

They can range from a full BBB or Norton badge to a tiny lock icon like HM.com:

Can’t find it? Exactly, it’s small.

But only 37.5% of our top 40 mobile sites had a trust symbol on the final payment page.

Is that bad? Maybe not. In a few tests we’ve done on trust symbols on checkout pages (not mobile only), we’ve seen largely no significant improvement in conversion rate by including the badges.

Our AB tests show…

Here are 2 AB tests that did not show an increase in conversion rate via trust badges.

In one test we added

- a Geotrust security badge

- a lock icon with SSL encryption copy

- credit card logos

…and saw no change in conversion rate.

This was for a site with the largest two age groups in Google Analytics demographic report being 55 – 64 and 65+ (thus, exactly the demographic you’d expect would need security badges).

In a second test, for a brand where the two largest age buckets were 25 – 34 and 35 – 44, we tested the inclusion of the following on the cart page:

- McAfee Secured badge

- Norton Secured badge

- Lock icon with “Shop with Confidence”

- A few store specific guarantees such as 20 year warranty and made in the USA

We saw no statistically significant difference in conversion rate. We tested multiple variations and the one with none of the above badges performed the worst during the test period, but the reduction in conversion rate from original was only 3.2% and statistical significance was only 68%. In other words, no statistically significant difference.

Growth Rock Recommendation: Do you need badges? Maybe not. Many brands on our list did not have them. They are very easy to test, so we suggest doing so, if for no other reason than to quell the debate about them in the office.

What is the Final Payment Submit Button Called?

Once again, we don’t think the name of this button is likely to matter, but it can be fun to see what competitors are using, so here’s our histogram:

Growth Rock Recommendation: Pick something and save your mental energy for other things.

Does Final Payment Submit Button Appear Above the Fold?

Another CRO “truism” is placing things above the fold. We’ve seen this work well in many contexts. Mobile checkout is not one of them.

Our AB Tests Show…

In our tests, consumers don’t seem to care where the order or proceed to checkout buttons are. When they are ready, they know where to find them.

In our top 40 sites, slightly more than half (57.5%) did not have their final payment button above the fold.

Is There a Final Submission Confirmation or Review Page?

We find the final “review” or “confirmation” page an interesting discussion point.

Is it necessary?

Can the customer not review on the payment page?

Is it worth the extra page load and moment of pause?

The main arguments for this page are:

- To make sure there is no ambiguity for the customer before submitting their order

- Reduce customer service headaches post purchase if there are mistakes (e.g. wrong address)

- If you give them a chance to review, it will reduce errors on submission thereby increasing conversion rate

The counter-argument is of course that they may just be able to review on the final payment page itself and you don’t need to subject them to an additional page load.

We have not tested this but we think it’s interesting that there is very close to a 50/50 split in the top 40 sites.

Growth Rock Recommendation: This could very well be worth testing.

Newsletter Opt-In or Opt-Out Option?

Who likes email marketing more than ecommerce companies? No one. Well maybe email marketing software companies, but I digress.

55% of our Top 40 sites included an option to join a newsletter during checkout (almost always in the final payment step).

We did not test this (because we didn’t actually buy from all 40 sites) but it’s more than likely that 100% of the sites would put you on a newsletter after purchase even if they did not have a newsletter opt-in.

So it’s interesting to see that around 45% choose not to even give the customer a chance to “uncheck” the newsletter box and opt-out.

Several of these “NO” sites force you to sign up (Amazon, Zappos, Target) but many have a guest checkout option.

For example, here is Gap’s final submit page. There was no box to join their newsletter the entire time. You bet Gap will start sending me emails the moment I order.

All 40 Top Ecommerce Site Mobile Checkout Flows

Our Methodology

How did you decide what the top 40 ecommerce sites were?

There are many lists ranking the top ecommerce retailers in the US and globally.

Many claim to have information on sales volume, but this is questionable as many of the brands don’t release it publicly, so it has to be inferred.

We felt the easiest way around this issue is simply to use Alexa.com’s top sites list for the category shopping. We started with 50 and removed 10 that weren’t really “ecommerce” from a traditional UX perspective, or duplicates.

So, for example, Netflix is #2 in Alexa’s shopping category, but analysis of their mobile checkout flow is obviously not particularly useful for typical physical product ecommerce retailers, so we excluded them.

Also amazon.co.uk is largely a duplicate (from a UX perspective) of Amazon, so that was excluded.

The final list of 40 we used is below with screenshots of their mobile checkout flows.

Top 40 eCommerce Mobile Checkout Flows

- #1 Amazon

- #2 eBay

- #3 Etsy

- #4 Walmart

- #5 Ikea

- #6 BestBuy

- #7 Home Depot

- #8 Target

- #9 Nike

- #10 Wiley

- #11 Cambridge

- #12 H&M

- #13 Humble Bundle

- #14 Newegg

- #15 Bodybuilding.com

- #16 Nordstrom

- #17 Macy’s

- #18 Lowes

- #19 B&H

- #20 Zappos

- #21 Costco

- #22 Kohl’s

- #23 Gap

- #24 Urban Outfitters

- #25 Wayfair

- #26 Forever21

- #27 Gamestop

- #28 Sephora

- #29 Overstock

- #30 REI

- #31 Redbubble

- #32 6pm

- #33 Staples

- #34 iHerb

- #35 Walgreens

- #36 JCPenney

- #37 Sears

- #38 Barnes & Noble

- #39 Adidas

- #40 J.Crew

The Link Bar, an Ecommerce Mobile Homepage Navigation Alternative (to the Hamburger Menu)

Recent AB tests we’ve done suggest that many ecommerce sites could see an increase in mobile conversion rate by adding a “bar” of navigation links at the top of their mobile homepage, instead of relying solely on the hamburger menu.

We’re calling this a “Top Nav Link Bar”, or just “Link Bar”.

The Link Bar is an alternative to the much hated “Hamburger Menu”, which hides links behind the famous 3 bars (the hamburger). It’s hated enough that simply Googling “hamburger menu” returns anti-hamburger menu articles in the top 5 results!

In this article, we’ll discuss the Link Bar concept via two AB test case studies where we saw increases in visits to product pages and purchase conversion rate.

Finally, we’ll also show a set of design examples from popular ecommerce sites that implement a Link Bar concept in different ways.

Our hypothesis is that the Link Bar lets shoppers get to the product pages faster by exposing product and category page links normally hidden behind the hamburger menu. One less click is required and the links are more prominent, so it increases the chances of users proceeding “down funnel” and seeing products.

Let’s get to the two case studies.

Note: We can also analyze your mobile ecommerce store user experience. Learn more about what we do here, or join our email list to get new articles like this one emailed to you here.

Case Study 1: Mobile navigation Link Bar increases orders by 5% for an apparel store with 1000 products

First we have an apparel client that has over 1000 products across 9 categories (and multiple subcategories on their site).

So, pretty stereotypical ecommerce company.

What did the mobile homepage look like?

Since we anonymize clients, let’s use the mobile homepage of a well known brand that had a similar layout: Urban Outfitters.

Key characteristics of this mobile homepage (that were true of our client’s mobile homepage):

- Large image based full bleed photos that change depending on the current marketing campaign (about once a month)

- Main navigation hidden inside the hamburger menu

- If you scroll down far enough there are eventually links to categories

Here’s what we tested:

In the variation, we simply added the Link Bar, to the homepage only. There were 9 categories.

Note that we didn’t replace the hamburger menu. It’s still there and is still the most thorough way to navigate the different product categories.

But it’s no longer the easiest way — the Link Bar is.

The Link Bar was left-right scrollable and had arrows to help indicate that.

Here are the results.

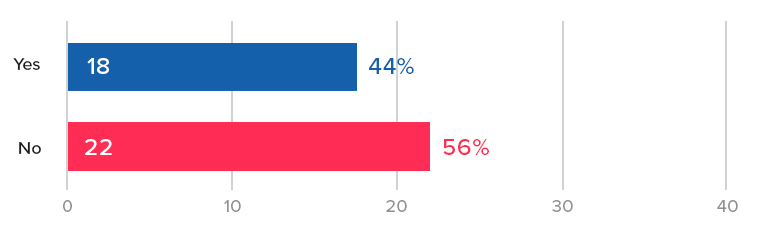

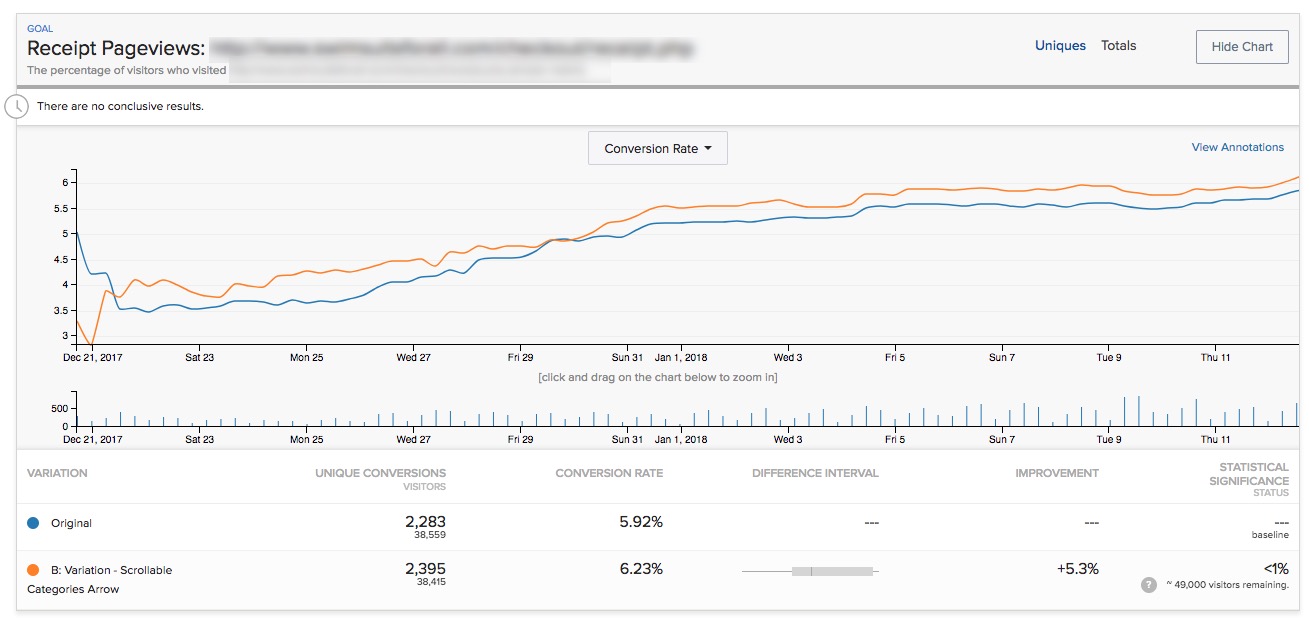

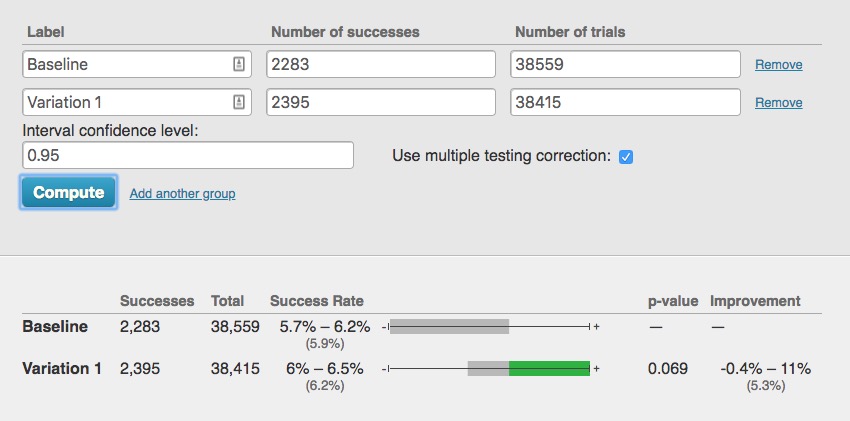

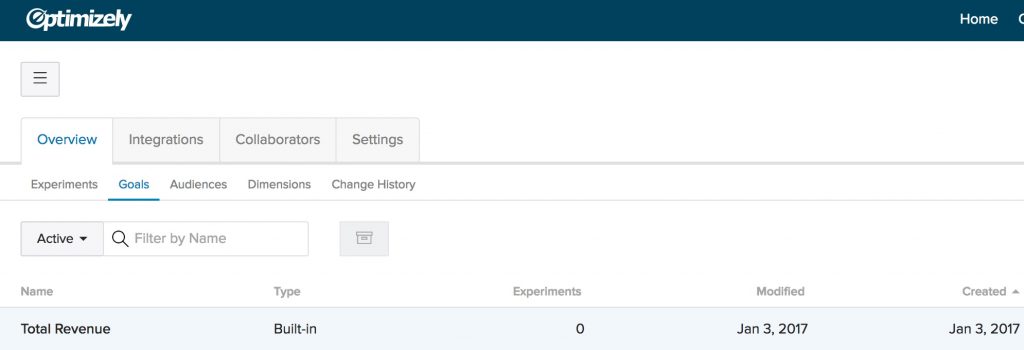

First, completed orders. After 28 days, we saw a 5% increase with 93% statistical significance:

Note Optimizely’s stat engine uses a more rigorous “two-tailed” statistical significance calculation, which does not give this any significance, but a traditional p-value calculation shows this:

So this is not a “runaway winner” by any means. The industry convention is to declare a winner if it reaches 95% statistical significance or higher when the test reaches your pre-determined number of visitors.

But that is, in the end, a “convention”.

With over 80,000 visitors, 2,300 conversion events per variation, and having run for exactly 4 weeks with the variation leading basically for the entire test, we felt the conclusion was “this is likely a winner and is more likely to perform better by 2% – 5% over longer periods.”
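To make the one-tailed versus two-tailed distinction concrete: for a symmetric test statistic, the two-tailed p-value is twice the one-tailed p-value, so the same data reports a lower “significance” under a two-tailed calculation. The snippet below is a sketch of that arithmetic only, assuming the 93% figure is a one-tailed result. It does not reproduce how any testing tool’s stats engine works internally, and the function name is ours.

```python
def two_tailed_confidence(one_tailed_confidence: float) -> float:
    """Convert a one-tailed confidence level to its two-tailed equivalent.

    Assumes a symmetric test statistic and an observed effect in the
    hypothesized direction.
    """
    one_tailed_p = 1.0 - one_tailed_confidence
    two_tailed_p = min(1.0, 2.0 * one_tailed_p)
    return 1.0 - two_tailed_p


print(round(two_tailed_confidence(0.93), 2))  # 0.86
```

In other words, a result that clears 93% on a one-tailed basis sits at roughly 86% on a two-tailed basis, which is one reason to read it as “likely a winner” rather than a definitive one.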

But that’s just one metric (albeit an important one). The story gets more interesting if you look at additional metrics.

Only the exposed listing pages showed an increase in pageviews

Pageviews of the category pages showed clear increases by 10% – 12% (with 99%+ significance), validating one of our critical hypotheses that the Link Bar would send more users “down funnel”.

For example here is the first category link on the left of the quick Link Bar we added (e.g. the “TOPS” link in the “B” mockup above):

The other two category pages showed similar results.

But those pageview increases were only seen for the exposed category links:

What about the links that were “hidden behind the scroll”? In other words, the ones where you needed to use either the arrow or scroll to the right to reveal them?

They showed no change in pageviews:

This was consistent for all the category page links that were hidden behind the scroll.

This confirms the original hypothesis of this test: Revealing links to product and category pages will increase the number of customers reaching them.

Certainly if category pages that were just to the right in our Link Bar didn’t see an increase in pageviews, then hiding all links behind the hamburger menu does the site no favors in terms of getting shoppers to the products.

Takeaways for your mobile site:

- Test putting links to your most popular product categories at the top of your mobile homepage.

- Try making the bar scrollable and see if you can reproduce this result in your store.

- Do you see indications of an increase in completed orders like we did? Maybe your store shows a far more definitive increase in conversion rate than the slight possible lift we saw above.

Case Study 2: Health food brand sees 29% increase with a navigation Link Bar on the homepage

Next we have a very different ecommerce brand, in the health food space with 3 product flavors.

Again, the homepage had copy and images and links but you had to scroll down the page to get links to the 3 PDPs.

So we added the navigation Link Bar just like before:

The variation in this case linked directly to the PDPs of the 3 different flavors (each flavor had its own PDP).

After 14 days, we saw a 29% increase in orders with 98% significance.

Traffic to this site was lower, however, so the test got only 139 vs. 107 conversions per variation. This is low. The difference is only about 30 orders, so again we have to put a qualifier that the variation “likely” performed better.
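To see why 139 vs. 107 orders can land near the significance level reported above, here is a minimal sketch of a one-sided, pooled two-proportion z-test. The visitor counts (5,000 per variation) are hypothetical, since they are not reported here, and the testing tool used in this study may calculate significance differently. Under those assumptions the result comes out at roughly 98%.

```python
from math import sqrt
from statistics import NormalDist


def one_sided_confidence(conv_a, visitors_a, conv_b, visitors_b):
    """Confidence that variation B converts better than A (pooled z-test)."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pooled conversion rate under the assumption of "no difference"
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    return NormalDist().cdf(z)


# Order counts are from the test above; visitor counts are ASSUMED for illustration.
confidence = one_sided_confidence(107, 5000, 139, 5000)
print(f"Confidence that the variation beats the original: {confidence:.1%}")
```

With conversion rates this low, the result is driven mostly by the raw order counts, which is why a gap of about 30 orders still deserves the “likely” qualifier.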

However there was no indication that it would perform worse than not having the links.

Link Bars can help expose customers to new products

In this case, of the 3 flavors, the second and third (Chocolate and Strawberry in the mockup above) saw large increases in PDP pageviews: +25% and +77% more visits, with 99.9% statistical significance and over 600 conversions per variation.

But, the most popular flavor did not see much of an increase.

Why?

In this case the site was known for its most popular flavor. Historically, that was the only flavor when the brand first launched. Referral links disproportionately went there, blog links disproportionately pointed to that flavor, and the homepage imagery and copy mostly talked about that flavor.

So in this case the Link Bar served to expose more customers to the rest of the company’s offerings.

This is a nice additional benefit of Link Bars. Note that those alternative flavors were inside the hamburger menu also, but as we saw in the first case study, having them exposed on the page (via the Link Bar) showed a definitive increase in visitors to those PDPs.

Conclusions and how to apply this to your own mobile ecommerce site

Taken together, both of these tests, on two very different ecommerce stores (1000 products vs. 3 products), suggest a similar theme:

Make it as easy as possible for mobile shoppers to get to your product offerings.

If you have hundreds or thousands of products, put links as close to above the fold as possible to your most popular categories.

In the first example above, a natural iteration of the test (that has not yet been tested) would be to stack the links instead of having them be in one scrollable row.

This will give shoppers an even better overview of exactly what the store offers.

This should send even more visitors “down funnel” and perhaps give the test a more definitive win over the baseline.

If you have only a few products, create top nav links to the product detail pages.

Finally, as always, you should test this yourself. Don’t assume these results will apply to your store.

Both of the AB tests above saw definitive increases in visits to the category or product detail pages, but the increases in order rate weren’t “runaway” winners, which we define as 99%+ significance with hundreds or thousands of conversion events for each variation.

That’s okay though. As we’ve written about before, not all ecommerce stores have the luxury of that much data. That doesn’t mean you should throw up your hands and not test anything, nor does it mean you should just use the old fashioned method of “debate designs in a room, loudest voice wins, and implement it outright”.

That’s even more dangerous.

Aside: We once had an in-house designer from a client ask if they could implement a hamburger menu on desktop because it “looked sleek”. (Facepalm)

This is why testing is important, even if you don’t get picture perfect increases in conversion rate (99% significance, and thousands of conversions over many weeks).

What about desktop? Why is this mobile only?

This isn’t relevant on desktop because almost all ecommerce sites already have exposed links to all categories (and often dropdowns to subcategories, aka a “mega menu”). So this is by definition almost always already implemented on desktop.

It’s just that the space constraints of mobile result in the hamburger menu.

Hopefully this article and the data we shared help you stop assuming everything needs to be collapsed behind the hamburger menu and start opening up other possibilities.

On that note, our variations aren’t the only way to go about this. Here are several more examples of alternatives to the hamburger menu from different ecommerce mobile sites.

Ecommerce mobile homepage examples

Who is doing this well already?

Here are some other brands that have clear links at the top of the mobile homepage, getting rid of the complete dependence on the hamburger menu:

Gap.com

Gap has a lot of products and categories. They have clear links to the main categories at the top of their mobile homepage:

The use of photos is a nice touch and could possibly increase engagement with the links and clarity for certain stores.

Note the links are not sticky upon scroll, whereas the links to Gap Inc.’s other brands at the top of the page are. Interesting.

Abercrombie

Also in the apparel world, Abercrombie chooses to simply split by Men and Women. This is worth testing versus a deeper category split like Gap’s above:

Lowe’s

For search heavy stores, Lowes.com has a great example of both featuring search and using a suggested area to basically push some category links. We hadn’t been to Lowes.com on this device before so these were likely just categories they wanted to promote (versus a personalized list based on past visits).

Finally, here’s a more bold homepage concept by Cos Clothing, who doesn’t need a thin strip of suggested categories but rather just dedicates the bulk of the homepage to sending shoppers to the right categories.

(Note: by the time we published this article, the Cos Clothing homepage had changed to include a promotion at the top instead of full bleed photos linking to the women’s and men’s departments.)

They have full bleed images for women and men followed by clear links.

We would love to test something this bold with one of our clients.

Final Aside: the homepage is often sacred ground for ecommerce organizations. People fight and negotiate over screen real estate there. So even we, as a third party optimization agency, often have severe restrictions on testing the homepage, much less radically redesigning it. Many thanks to the two clients who let us run the tests featured above.

Want to work with us to improve your mobile conversion rate? Learn more about working with us here, or join our email list to get new articles like this emailed to you when we release them here.

Two ecommerce upsell AB tests that were worth $2 million+ in annual revenue

Not presenting related items at the shopping cart step could be costing many ecommerce stores millions in potential revenue.

In particular, I’ve noticed that while large, well known brands do this consistently (see examples below), mid-size ecommerce stores often don’t, and that’s likely a mistake.

In this article, we’ll show data from two AB tests where we added one-click upsells and cross sells.

The first increased average order value (AOV) by $55 (worth millions in annual revenue).

The second increased conversion rate by 13%, which for any 8 figure or greater ecommerce store is also worth 7 figures in extra annual revenue.

Finally, we’ll also show (and analyze) 5 live examples from well known brands of upselling and cross selling related products at the cart stage.

This way we hope you can find an upsell implementation that works for you.

Note: We are a conversion optimization agency exclusively focused on ecommerce. Want our conversion and UX experts to evaluate your upsells or optimize your conversion rate? Learn more about what we do on the homepage or contact us via the red button at the top.

How do upsells and cross selling work in ecommerce?

Some people have all sorts of specific definitions of “upsell”, “cross sell”, and “downsell”.

Quickly, for our purposes, I prefer to use the more general definitions of upselling and cross-selling, which just mean you’re trying to get the customer to increase their order value by presenting additional items they might want.

It may be a more expensive item (upsell) or some add-on items (cross sell). But here’s the most common type in ecommerce (discussed in more detail below):

Once you add to cart, Gap is showing 4 additional items I can consider. We’ll discuss the implementation details below (for example here you need to click into each product detail page (PDP), you can’t just add those items to cart) but that’s the idea.

For now, let’s talk strategy.

As the two case studies in this article below show, upsells and cross sells can either:

- Increase AOV

- Increase conversion rate

(If you’re curious how an upsell can increase conversion rate, scroll down to the second example.)

Let’s start with an AB test that does the former.

Upsells that increase AOV: $2 million/year extra revenue for an online furniture store

Our first example is from an online furniture store. Let’s say in this case that they sell sofas ranging from $850 to $2000+ with an AOV of $1200.

Note: They don’t actually sell sofas, but it’s analogous to sofas. You can learn how and why we anonymize brand identities here. All data we report is 100% real.

Their most popular sofas are leather, and what’s interesting in this case study is not the sale of the leather sofas, but of a particular upsell: a leather conditioning kit that helps protect the sofa and costs between $40 and $80.

Something like this:

The conditioning kit is a perfect cross sell for a customer buying a leather sofa. It actively protects and lengthens the life of the thousand dollar or more purchase the customer is already making.

If you’re already spending $1500 on a leather sofa, why not pay $60 to protect it and make it last longer?

But these complementary accessories were not easy to navigate to on the site at the time of this test. They weren’t promoted heavily.

So we hypothesized that mentioning it as an option at the cart step, and making it very easy to add to cart, would increase AOV.

Building our AB test from the hypothesis

You can turn a hypothesis into an actual UI/UX treatment in many different ways and this step is critical. Our hypothesis was:

Offering a leather conditioning kit as a one click upsell when a customer adds a sofa to cart will increase AOV and thus total revenue.

But how should we actually offer the leather conditioner in the cart?

With a photo?

As a one line item?

Do we add some copy to really “sell” it or keep it low key?

Will any of these decisions possibly hurt sofa conversions themselves?

We opted to start low key because we felt that the change of going from not mentioning that leather conditioner at all to mentioning it was a big enough change.

Our variation design:

The pink strip is what we added.

We coded the plus icon to add the conditioning kit to cart on click. If the customer clicked the name of the conditioning kit instead, it took them to its product detail page (PDP).

Results

Typically we run tests for around 2 – 4 weeks, but we ran this test for 41 days (nearly 6 weeks)! Why so long?

Because what we were looking for here was a change in AOV, but the current AOV was above $1000 and the leather conditioning kit costs between $42 and $84.

So we were trying to detect a pretty small change.
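A rough way to see why a small AOV change needs a long test is the standard sample size approximation for comparing two means. The sketch below assumes a two-sided 5% significance level, 80% power, and a hypothetical order value standard deviation of $600. None of these figures come from the test itself, and the function name is made up for illustration.

```python
from math import ceil
from statistics import NormalDist


def orders_needed_per_variation(min_lift, order_std_dev, alpha=0.05, power=0.80):
    """Approximate orders per variation needed to detect an AOV lift of min_lift."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance threshold
    z_power = NormalDist().inv_cdf(power)          # desired chance of detecting a real lift
    return ceil(2 * ((z_alpha + z_power) * order_std_dev / min_lift) ** 2)


# order_std_dev=600 is an ASSUMED spread of order values, for illustration only.
for lift in (40, 80, 200):
    needed = orders_needed_per_variation(lift, 600)
    print(f"${lift} lift: about {needed:,} orders per variation")
```

Because the required sample grows with the inverse square of the lift, halving the change you want to detect roughly quadruples the number of orders you need.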

After 41 days, with over 4000 transactions and $5,600,000 in revenue tracked, AOV had increased by $55, with 92% statistical significance.

The AOV increase held steady for the last 4 weeks of the test with statistical significance sitting in the 90% – 95% range the entire time.

Here is a plot of quantity sold per week of the upsell’s product SKU in Google Analytics’ ecommerce report:

Previously they were selling around 40 – 80 conditioning kits per week. Once we turned on the test (which means only 50% of users saw the variation), sales jumped immediately to 150 – 180 per week.

In fact, the warehouse ran out of leather conditioning kits when we turned this test to 100% of traffic and we had to turn it off temporarily until they could order more.

This increase in AOV, on average, was worth an extra $180,000 per month in revenue (that’s over $2,000,000 of extra revenue per year!).
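The annual figure follows from simple arithmetic on the numbers reported above. The sketch below also backs out the monthly order volume that a $55 lift and $180,000 per month would imply, assuming order volume itself did not change. That volume is an inference for illustration, not a number reported by the client.

```python
AOV_LIFT = 55                    # dollars per order, from the test result
MONTHLY_REVENUE_LIFT = 180_000   # dollars per month, as reported above

annual_revenue_lift = MONTHLY_REVENUE_LIFT * 12
# Inferred, not reported: orders per month needed for a $55 lift to add up to $180,000
implied_monthly_orders = MONTHLY_REVENUE_LIFT / AOV_LIFT

print(f"Annual revenue lift: ${annual_revenue_lift:,}")
print(f"Implied orders per month: {implied_monthly_orders:,.0f}")
```

To estimate the value for your own store, swap in your own AOV lift and monthly order count.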

Takeaways for your site

Ask yourself: Are there complementary, lower priced products that pair really well with your main product(s)?

Walk through the typical buying and checking out funnel.

- Is it obvious to customers that these products exist? It should be.

- Is it easy for them to add them to cart? It should be.

- Does the copy position them in a way that makes it clear they complement the primary products? It should.