Optimizely vs Adobe Target vs VWO (Visual Website Optimizer) is a decision we’ve helped many clients work through. We’ve run hundreds of AB tests for ecommerce clients on all three platforms (we estimate we run 200+ AB tests annually). In this article we compare the pros and cons of each based on our hands-on experience. We cover where we’ve seen real differences in practical use, and where we haven’t seen much of a difference.

We hope this article provides a useful contrast to the surface-level comparisons on software comparison sites and in the marketing materials from Optimizely, VWO, and Adobe.

In addition to differences between the platforms, we also share strategy nuggets in this article, such as:

- Why your AB tests should measure more than just one or two conversion goals

- When to use click goals versus pageview goals

- How we set up preview links so we can easily QA (quality assurance) multi-page tests

Who we are: We’re an ecommerce conversion rate optimization (CRO) agency. We design and run AB tests in multiple platforms every day. You can learn more about how we can help your ecommerce brand increase conversion rates, or join our email list to get new articles like this one emailed to you.

Finally, we got lots of help on this article from Brillmark, an AB test development agency we partner with and highly recommend.

Contents

- Setting Up Goals

- Preview Links

- Results Interface

- Statistical Model

- Integration with Analytics

- VWO’s CRO Package

- Developing Tests

- Other Features

- Pricing and Which to Choose

How We Came Up With This Review

This review is based on our experience actually developing and running conversion optimization programs for ecommerce clients using all three AB testing tools.

This article was not influenced in any way by any of the software platforms we discuss, and we have no affiliate relationship with any of the platforms.

We simply run ecommerce AB tests all day, every day, through our agency. Below, we first discuss the main functional differences in using the software. At the end, we cover what we know about pricing for each platform and recommend situations where each platform may be the right choice.

Setting up Goals in Optimizely vs Adobe Target vs VWO

We’ve emphasized this in its own article before and mention this to every client we work with: setting up proper goals is essential to every AB test.

To recap briefly here:

- It’s not enough to measure just one goal in an AB test (for example, revenue) — you could miss the detail of why that goal increased, decreased, or stayed the same.

- Don’t measure only the immediate next step and declare winners and losers on that goal alone. For example, don’t judge an AB test on the ecommerce product detail page using “Add to cart clicks” as your only goal. We’ve seen add to cart clicks differ from completed checkouts more times than we can count.

- Be careful about tests where one goal increases (even with statistical significance) but related goals don’t agree with that result. Try to find an explanation, or flag the test as one to retest later. For example, suppose the test was on the category or listing page, and transactions increase while add to cart clicks, cart views, and checkout views stay flat. Why would that be?
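Here is a minimal sketch of that sanity check in Python. It assumes you have exported each goal’s relative lift and reported significance from your testing tool. The goal names, funnel order, 95% threshold, and 1% “flat” tolerance are hypothetical choices for illustration. They are not settings from Optimizely, VWO, or Adobe Target.

```python
from dataclasses import dataclass

@dataclass
class GoalResult:
    name: str
    lift: float          # relative lift of variation vs. original, e.g. 0.06 for +6%
    significance: float  # statistical significance reported by the tool, 0..1

# Hypothetical funnel order, from the top of the funnel down to the final conversion.
FUNNEL = ["add_to_cart_clicks", "cart_views", "checkout_views", "transactions"]

def flag_inconsistent(results: dict[str, GoalResult],
                      threshold: float = 0.95,
                      flat_tolerance: float = 0.01) -> list[str]:
    """Warn about significant goals whose measured upstream funnel steps look flat."""
    warnings = []
    for i, goal in enumerate(FUNNEL):
        r = results.get(goal)
        # Only check goals that are significant and actually moved.
        if r is None or r.significance < threshold or abs(r.lift) <= flat_tolerance:
            continue
        # Upstream steps that were measured but barely moved.
        flat_upstream = [g for g in FUNNEL[:i]
                         if g in results and abs(results[g].lift) <= flat_tolerance]
        if flat_upstream:
            warnings.append(f"{goal} moved {r.lift:+.1%} but upstream goals are flat: "
                            f"{', '.join(flat_upstream)}; explain or retest")
    return warnings
```

A significant +6% lift in transactions alongside flat add to cart clicks, cart views, and checkout views would be flagged. That is exactly the kind of result worth explaining or retesting.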

So the solution to these problems is twofold:

- Have a Standard Goal Set that lets you measure key steps in the entire conversion funnel. For example in ecommerce, have a standard set of goals for transactions, revenue, views of key pages in the checkout flow, cart page views, and add to cart clicks.

- Define Unique Goals for Specific AB Tests: For tests that add, remove, or edit certain elements, you can add specific goals that measure usage and engagement of those elements. For example, if you add something in the image carousel of a product page, you can add a unique click goal inside that carousel (in the alt images for example) to see if your treatment affected how many people use the carousel. This is in addition to your standard goal set, not in replacement of it.

To do this, it should be easy to set up and reuse goals within your AB testing solution.
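To make the “standard goal set plus test-specific goals” idea concrete, here is a minimal planning sketch in Python. The `Goal` class, the URL patterns, and the CSS selectors are hypothetical placeholders. Each platform stores goals in its own format, so treat this as a model for organizing goals, not as any platform’s API.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Goal:
    name: str
    kind: str    # "pageview" or "click"
    target: str  # URL pattern for pageview goals, CSS selector for click goals

# Hypothetical standard goal set, reused unchanged in every ecommerce test.
STANDARD_GOALS = (
    Goal("add_to_cart_clicks", "click", "button.add-to-cart"),
    Goal("cart_views", "pageview", "/cart"),
    Goal("checkout_views", "pageview", "/checkout"),
    Goal("transactions", "pageview", "/order-confirmation"),
)

def goals_for_test(*unique_goals: Goal) -> list[Goal]:
    """Standard goals first (same order every time), then test-specific goals."""
    goals = [*STANDARD_GOALS, *unique_goals]
    names = [g.name for g in goals]
    if len(names) != len(set(names)):
        raise ValueError("goal names must be unique within a test")
    return goals

# Example: a carousel test adds one unique click goal on top of the standard set.
carousel_goals = goals_for_test(
    Goal("carousel_alt_image_clicks", "click", ".pdp-carousel .alt-image"))
```

Keeping the standard set in one place and appending unique goals, rather than editing the standard set, keeps results comparable from test to test.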

Two Most Common Types of Conversion Goals: Pageview vs. Click Goals

There are two types of goals that we find most useful in AB testing:

- Pageview Goals

- Click Goals

Pageview Goals

You typically use pageview goals for the most critical metrics: (a) checkouts or final conversions (via the receipt, success, or thank you page) and (b) the pages that precede them (the checkout flow).

Click Goals

Click goals are most useful for measuring user engagement with specific elements. They also work as substitutes when you can’t use pageview goals, for example when a form submission doesn’t redirect to a thank you page (a form submission event goal also works here). For the engagement use case, we find click goals most useful when testing the effect of a specific element on conversion rate.
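The difference between the two goal types comes down to what triggers them. A pageview goal fires when a page whose URL matches a pattern loads. A click goal fires when a matching element is clicked. The sketch below models that in Python over a list of hypothetical events. It simplifies “CSS selector” to a single class name, so it is an illustration of the idea, not how any platform actually matches selectors.

```python
import re
from dataclasses import dataclass

@dataclass(frozen=True)
class Event:
    type: str                    # "pageview" or "click"
    url: str                     # URL path where the event happened
    element_classes: tuple = ()  # CSS classes of the clicked element (clicks only)

def pageview_goal_hit(event: Event, url_pattern: str) -> bool:
    """Pageview goal: fires when a page whose URL matches the pattern loads."""
    return event.type == "pageview" and re.search(url_pattern, event.url) is not None

def click_goal_hit(event: Event, css_class: str, url_pattern: str = ".*") -> bool:
    """Click goal (simplified): fires when an element with the class is clicked."""
    return (event.type == "click"
            and css_class in event.element_classes
            and re.search(url_pattern, event.url) is not None)
```

Consider a form that submits without redirecting: the session never loads a thank you page, so a pageview goal on `/thank-you` can’t fire. A click goal on the submit button still records the conversion.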

Using a common ecommerce example, say you are testing the impact of a particular widget or feature on a page.

Taking Boots.com’s mobile cart as an example, say you are testing the impact of a “save for later” link:

What goals should you include in this test?

First, you want the usual ecommerce checkout flow goals: These are a series of pageview goals through the checkout flow, like shipping, checkout, conversions (completed orders or transactions) and revenue.

Those goals tell you whether including this link hurts checkouts and, therefore, immediate conversion rate.

But they don’t tell you the complete customer experience story: you also want to know whether people actually use the link. So you’ll want one click goal for the link itself, and possibly pageview goals for any page the link leads to (this varies by site).

Thus both pageview goals and click goals are useful.

(Note: This example is ecommerce, because that’s what we work on exclusively now, but it applies to other website types as well. For example when we did SaaS AB testing, we’d similarly want to measure clicks on pricing option boxes and other elements we added to marketing pages.)

Which Platform Makes It Easier to Create Conversion Goals?

In general, we find Optimizely and VWO much easier than Adobe Target for setting up conversion goals. We found some pros and cons when comparing Optimizely vs VWO that may help you pinpoint the platform right for you.

In Optimizely and VWO you can create goals at the platform level and have them be re-usable across multiple experiments.

In Optimizely, you can go to Implementation > Events and manage your list of events and create new ones.

In particular, look how easy it is to create a click goal (We’ll contrast this with doing it in Adobe Target later):

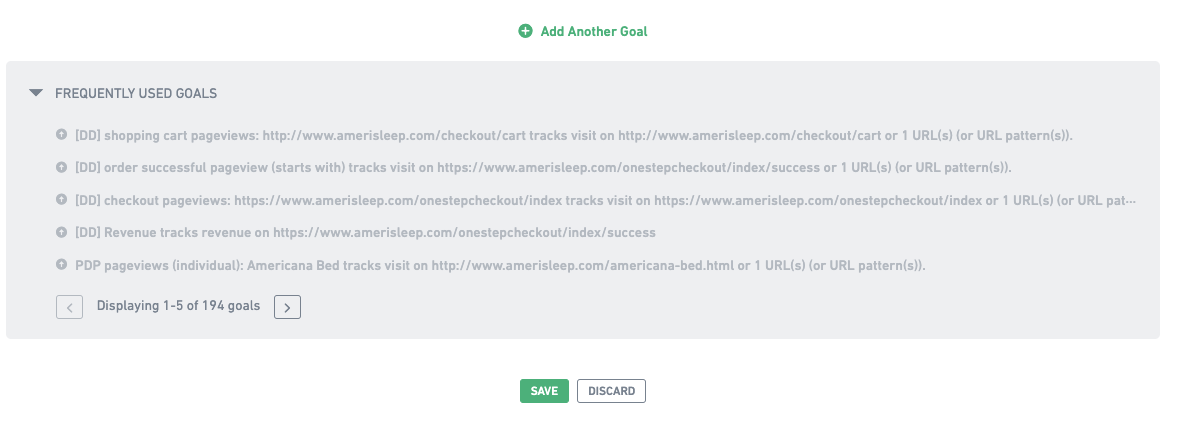

The equivalent in VWO is their frequently used goals section in the setup area of every AB test:

Unlike Optimizely, VWO’s AB testing product has no central “AB test goal management” section. You simply see a list of your most frequently used goals (as in the screenshot above) when creating goals for any test you’re building. This is perhaps slightly more cumbersome than Optimizely’s central events area, but we find the two more or less equivalent.

[Aside: VWO does have a “Goals” section in their CRO product, more on that below. None of our clients use their CRO product, just AB testing, so we don’t have direct experience with it. It seems to be more for tracking certain conversion metrics over time, site-wide, than a database of goals to use in AB tests.]

Also, just like in Optimizely, adding click goals is just as easy as pageview goals:

In contrast, in Adobe Target you can create click goals, but with some restrictions. Namely, you can only do this in tests created with the visual editor method; it doesn’t work for experiments created with the form method.

To actually add the goal, you have to open the Target visual editor and click on the element you want. Target then lets you select which CSS selector to target for the click (e.g. class or ID). The restriction is that this selector can’t be updated during the test. That becomes a problem in certain situations. For example, the element’s ID or class may change dynamically with every new session. In that case, the static selector chosen by the Adobe visual editor stops matching, and you can’t modify it manually.

Pro Tip: Create a Base Experiment Template

Regardless of which platform you’re developing in, we find it convenient to build a base experiment template. It contains the JavaScript and CSS you use most, plus the goals and audience settings you use most often.

This standardizes your AB tests in a useful way. Our strategists like seeing the same set of goals, in the same order, in every experiment, which makes results interpretation easier. Meanwhile, our developers have a set template for writing their test code. It also lets developers hand off work: one can finish a test another started, they can help each other out, and so on.
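Here is one way to model such a template, as a minimal Python sketch. The field names, the shared CSS rule, and the `waitForElement()` helper mentioned in the JavaScript placeholder are all hypothetical. No platform uses this schema. The point is that every new experiment starts as an exact copy of the base, with the standard goals in the same order.

```python
import copy

# Hypothetical base template; field names are illustrative, not any platform's schema.
BASE_TEMPLATE = {
    "audience": {"device": "all", "exclude_internal_ips": True},
    "goals": ["add_to_cart_clicks", "cart_views", "checkout_views",
              "transactions", "revenue"],
    "shared_css": ".cro-hidden { display: none !important; }",
    "shared_js": "/* hypothetical shared helpers, e.g. waitForElement() */",
}

def new_experiment(name: str, extra_goals=(), **overrides) -> dict:
    """Copy the base template, append test-specific goals, apply overrides."""
    exp = copy.deepcopy(BASE_TEMPLATE)  # deep copy so edits never leak into the template
    exp["name"] = name
    # Standard goals keep their order; unique goals go at the end.
    exp["goals"] += [g for g in extra_goals if g not in exp["goals"]]
    exp.update(overrides)  # overrides replace whole top-level fields
    return exp
```

For example, `new_experiment("PDP sticky add to cart", extra_goals=["sticky_bar_clicks"], audience={"device": "mobile"})` yields the five standard goals followed by `sticky_bar_clicks`, with a mobile-only audience.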

Preview Links in VWO vs Optimizely vs Adobe Target

Preview links are important to do QA (quality assurance) on AB test variations.

Briefly: in traditional development, you build on a staging or development server, QA there, then push to production. In AB test development, you build inside the AB testing platform and QA the variations directly on the production site using preview links. Preview links are unique links that show a page as the variation. You need the link to see it, so no real users on the live production site will ever see the variation before launch.

All 3 platforms (and basically every AB testing platform) have preview links, but there are some subtle differences in how convenient they are to use.

The first difference is how easy it is to see whether goals are firing and measuring properly.

In Optimizely, there’s a preview widget that lets you see details about the experiments running, a live feed of the events Optimizely is picking up, and more.

It’s very convenient for QA-ing a split test. For example, you can click on elements and check the events feed to see whether Optimizely picks them up as click goals. That way you know before launch whether all your goals are measuring properly. With many tools this requires checking logs and the browser console, but this feature lets non-technical team members do the same.

The other thing we use the preview widget for is seeing pages under specific audience and experiment conditions.

For example, note that the tab above is called “Override”. This means you can turn specific audiences and experiments on and off for the purposes of viewing the page. This is useful for us because we’re always running multiple tests on a client’s site at one time. Say a test is running on the PDP and we’re QA-ing a new test in the pipeline that is also on the PDP. We can preview the new test with the current one turned off. This speeds up the AB test development process (otherwise you’d have to wait until the first test concluded to QA and finish developing the new test).

VWO has a similar preview widget, but its features are more limited. It just lets you choose which variation of the experiment you want to see, and shows you some log data to confirm things loaded properly:

It doesn’t show you click and event firings as you click around the site and load different pages.

Preview Widgets Help You Easily Perform QA on Multi-page Tests

One other convenient thing about the preview widget for both Optimizely and VWO is that it lets you browse around the entire site and “stay” in the variation.

This is really useful for multi-page experiments, which are common for most companies doing AB testing.

Say you have an AB test running on all PDPs. If you get one preview link for one PDP, and you open it, you can see how the variation will look on that one page, but what about on other pages? Maybe other PDPs have longer product names, more photos, or different elements that could “break” the variation. It’s useful to test the variation code on multiple PDPs to check for this.

Normally, with just one preview link, if you click off that page you don’t remain in the variation; you just land on the normal live site.

But with the preview widget above in Optimizely and VWO, you can stay in the variation regardless of where you go on the site. So you can check as many PDPs in this example as you like and see how the variation looks on all of them. This helps speed up QA a lot.

Adobe Target does not have a preview widget like this. You simply get a preview link, and that’s it. This is a bit inconvenient, but manageable. Our developers add a JavaScript “QA cookie” to the test code when they develop the test. This cookie keeps you in the variation once you open the preview link, and we set it to expire in 1 hour. So once someone opens a single preview link, they are “in” the variation for an hour. They can browse around the site and see every page as a user in the variation would.

We actually use this QA cookie method on all split testing platforms, including Optimizely and VWO. Although the preview widgets in Optimizely and VWO are useful, they require you to log into the Optimizely or VWO account and click preview to reach them. So when a developer finishes coding a test and passes it to QA, a single link that keeps people in the variation is really convenient. It works for the QA team, the designers, and the clients, who aren’t always logged into the right account.
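Our developers implement the QA cookie as client-side JavaScript inside the test code. In the browser it comes down to something like `document.cookie = "qa_variation=B; max-age=3600; path=/"`. To keep all examples in one language, here is the same decision logic sketched in Python with the standard library’s `http.cookies`. This is a minimal sketch only. The query parameter and cookie name `qa_variation`, and both function names, are hypothetical, not part of any platform.

```python
from http.cookies import SimpleCookie
from typing import Optional
from urllib.parse import parse_qs, urlparse

QA_PARAM = "qa_variation"   # hypothetical query parameter appended to the preview link
QA_COOKIE = "qa_variation"  # hypothetical cookie name
QA_TTL_SECONDS = 60 * 60    # stay in the variation for 1 hour

def qa_cookie_header(url: str) -> Optional[str]:
    """If the URL is a preview link, build a cookie string pinning its variation."""
    params = parse_qs(urlparse(url).query)
    if QA_PARAM not in params:
        return None  # ordinary page view: leave the visitor alone
    cookie = SimpleCookie()
    cookie[QA_COOKIE] = params[QA_PARAM][0]
    cookie[QA_COOKIE]["max-age"] = QA_TTL_SECONDS  # expires after 1 hour
    cookie[QA_COOKIE]["path"] = "/"                # valid on every page, not just the preview URL
    return cookie[QA_COOKIE].OutputString()

def forced_variation(cookie_header: str) -> Optional[str]:
    """On any later page, return the variation stored in the QA cookie, if present."""
    cookie = SimpleCookie()
    cookie.load(cookie_header)
    return cookie[QA_COOKIE].value if QA_COOKIE in cookie else None
```

Setting `path=/` is what lets a reviewer open one preview link and then browse to other PDPs while staying in the variation. The one-hour `max-age` keeps QA sessions from lingering.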

Results Page User Interface

In our view, the results page UI is one of the biggest differences between Optimizely vs VWO.

In Optimizely there is a single long page of results and graphs for all goals, which is easy to scroll through:

…and there’s a nice summary of the results themselves at the top that you can horizontally scroll through:

In our opinion VWO’s results page UI is a little clunkier.

We use the “Goals” report almost exclusively (more on how it differs from the “Variations” report later).

You have a list of goals on the right and you have to click into them one by one to see the results.

When you click on each, you get a bar graph with numerical results underneath.

You can click the Date Range Graph to see the cumulative conversions. It doesn’t have an option to see the conversion rate by date like the Optimizely graphs do.

This is largely serviceable and fine. When deciding between Optimizely and VWO, we recommend VWO to most companies getting started with AB testing. In our opinion, these minor inconveniences are well worth the cost savings vs. Optimizely. But if you’re a power user, this is something to keep in mind.

Adobe Target has, in our opinion, the clunkiest results page.

First, it opens with only one goal’s results displayed:

Note above it has only one goal under “Shown Metrics”. So you have to manually select the other goals to include in your report.

The second annoyance comes once you do select all your goals for display. A clunky accordion UI makes you manually open and close one goal after another to see all of their results. By default, every goal except the first shows only high-level results, without percentage increase or statistical significance, which isn’t that useful.

Here it is with all accordions closed:

Here it is with one goal’s results open:

Again, these aren’t major annoyances.

As many have written before (most notably Evan Miller in “How Not To Run an A/B Test”), you shouldn’t be checking AB test results all the time anyway.

Nonetheless, if you really integrate testing into your company’s CRO processes, you will use these features often. So in this article we wanted to be transparent about all the pros and cons we’ve encountered, even small annoyances, and then tell you which ones we think are a big deal and which are not.

Statistical Model Used in Reporting Results

We’ll be brief here as a lot has been written about statistical models for interpreting AB test results, and we’ve found that ecommerce teams start falling asleep when we talk about AB test statistics too much.

In terms of platforms, both VWO and Adobe Target use more “standard” calculations of statistical significance (stat sig = 1 – p-value). VWO uses a z-score based calculation for its “Chance to Beat” number, and Adobe Target uses a traditional confidence level in its results reporting.
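For reference, here is a minimal two-proportion z-test in Python. It is the kind of “standard” fixed-sample calculation described above, with significance = 1 − p-value. This is the textbook formula, not necessarily the exact computation inside VWO or Adobe Target. Their implementations may differ in details such as one-sided vs. two-sided tests.

```python
from math import erf, sqrt

def normal_cdf(z: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1 + erf(z / sqrt(2)))

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Return (z, two-sided p-value, significance = 1 - p) for variation B vs. original A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # conversion rate assuming no difference
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - normal_cdf(abs(z)))
    return z, p_value, 1 - p_value
```

Take 300 vs. 345 conversions out of 10,000 visitors per arm. The variation shows a +15% lift, yet significance comes out at only about 93%, short of a 95% threshold.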

Optimizely, however, is unique in that it uses a statistical model it developed with professors at Stanford, which it calls its “Stats Engine”. This is a somewhat recent feature that rolled out around the same time as Optimizely X (what Optimizely now calls its testing tool). The math behind it is complex, but the end result for you, the user, is a specific claim from Optimizely. It says that with its statistics calculation you can “peek” at the results whenever you want, and stop the test the moment it hits your significance threshold (say, 95%).

That’s really convenient, but in our experience we have seen multiple instances where Optimizely reported 90%+ statistical significance, only for it to drop back down after more data was collected.
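Significance rising above a threshold and then falling back is easy to reproduce with a small A/A simulation. Both arms share the same true conversion rate, so every “significant” result is a false positive. The sketch below is hedged in an important way: it applies a naive fixed-sample z-test at every peek. It is not a model of Optimizely’s Stats Engine, which is designed to account for continuous monitoring. It illustrates why naive running significance can cross a threshold and fall back. It does not show how any specific platform behaves. All parameters are arbitrary.

```python
import random
from math import erf, sqrt

def p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value of a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions yet (or all converted): nothing to compare
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))

def aa_false_positive_rates(runs=200, visitors=4000, rate=0.05,
                            peek_every=200, alpha=0.10, seed=1):
    """Share of A/A tests 'significant' (here at 90%) at the end only vs. at any peek.

    visitors should be a multiple of peek_every so the final look is also a peek.
    """
    rng = random.Random(seed)
    end_hits = peek_hits = 0
    for _ in range(runs):
        ca = cb = 0
        ever_significant = False
        for n in range(1, visitors + 1):
            ca += rng.random() < rate  # both arms share the same true rate
            cb += rng.random() < rate
            if n % peek_every == 0 and p_value(ca, n, cb, n) < alpha:
                ever_significant = True
        end_hits += p_value(ca, visitors, cb, visitors) < alpha
        peek_hits += ever_significant
    return end_hits / runs, peek_hits / runs
```

Typically, the end-only share lands near the 10% you’d expect at a 90% threshold. The share that looked significant at some peek is noticeably higher.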

Integration with Analytics

As you start to do AB tests, a proper integration with your regular analytics tools becomes borderline essential. In our experience, there are two main reasons why:

- Other Metrics – You’ll want to know how the variations in the experiment differed in metrics that you did not think to create goals for beforehand (e.g. time on site, visits to some other page on the site you didn’t think of, etc.)

- Consistency with Analytics – You’ll want to validate the AB test platform’s results against your own analytics platform

(1) Other Metrics

No matter how carefully you measure plenty of goals in your AB tests, you’ll inevitably have tests where everyone wants to know how the variations compared on metrics you didn’t set up as goals in the AB testing platform.

Often, ambiguous results (a couple metrics increasing for the better, others not) will lead the team to want to know auxiliary information (e.g. “Well, how did this affect email capture?” or “Did more of them end up visiting [this other page]?”). Or you simply may want to know about other metrics related to user experience that you didn’t think to include as goals when launching the test (“Did the bounce rate decrease?”, “How was time on site affected?”)

Integrating your AB testing software with your analytics platform lets you answer these questions when they arise. You can create segments in analytics (Google Analytics, for example) for each variation of your AB test and see how they differ on any metric the analytics platform measures.
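Once the variation name is pushed into analytics (for example, as a custom dimension), comparing variations on metrics you never set up as goals is essentially a group-by. Here is a minimal sketch over exported session rows. The field names `variation`, `bounced`, and `seconds_on_site` are hypothetical; they are not a Google Analytics or Adobe Analytics export schema.

```python
from collections import defaultdict
from statistics import mean

def compare_segments(sessions):
    """Per-variation bounce rate and average time on site from session rows."""
    by_variation = defaultdict(list)
    for s in sessions:
        by_variation[s["variation"]].append(s)
    report = {}
    for variation, rows in sorted(by_variation.items()):
        report[variation] = {
            "sessions": len(rows),
            "bounce_rate": sum(r["bounced"] for r in rows) / len(rows),
            "avg_seconds_on_site": mean(r["seconds_on_site"] for r in rows),
        }
    return report
```

The same pattern extends to any metric in your export, such as visits to a page you didn’t think to set up as a goal.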

(2) Consistency with Analytics

A common issue in AB testing is not seeing the lifts from AB tests manifest themselves in analytics once the winning variant is implemented on the site. This problem is almost always due to other factors affecting conversion rate, not the AB test being “invalid”.

For example, natural fluctuations in site conversion rate can be massive (due to day of the week, promotions, changes in ad traffic, etc.). A 10% improvement in conversion rate from the PDP to checkout can easily get buried in those fluctuations. That doesn’t mean it’s not important: for an 8-figure brand, that 10% increase is worth a lot annually. It just means AB testing is the way to measure it. Expecting a noticeable bump after implementing the winner, when your normal fluctuations are huge, is not reasonable.
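Here is a back-of-the-envelope calculation of why a real lift can disappear in the noise yet still be worth a lot. Every input is hypothetical:

- a $20M brand converting at 2%
- day-to-day conversion rate swings of about ±20%
- a test that lifted PDP-to-checkout progression by 10%, of which we assume only 30% carries through to completed orders

It also assumes revenue scales with orders (constant average order value).

```python
# All inputs are hypothetical, for illustration only.
annual_revenue = 20_000_000  # an "8-figure" brand
baseline_cr = 0.020          # sitewide conversion rate
daily_swing = 0.20           # typical day-to-day change in conversion rate (+/-20%)
step_lift = 0.10             # lift measured at one funnel step (PDP -> checkout)
pass_through = 0.30          # assumed share of that lift that survives to orders

order_lift = step_lift * pass_through        # +3% orders
new_cr = baseline_cr * (1 + order_lift)      # 2.00% -> 2.06%
annual_value = annual_revenue * order_lift   # assumes constant average order value
noise_to_signal = daily_swing / order_lift   # how much bigger normal swings are

print(f"Conversion rate: {baseline_cr:.2%} -> {new_cr:.2%}")
print(f"Extra revenue per year: ${annual_value:,.0f}")
print(f"Normal daily swings are ~{noise_to_signal:.0f}x larger than the lift")
```

Under these assumptions, a change worth about $600,000 a year moves the sitewide rate from 2.00% to 2.06%. That is a shift roughly 7x smaller than an ordinary day-to-day swing.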

All three testing solutions can integrate with analytics. For companies already on Adobe Analytics, Target has the obvious potential perk of coming from the same software company. In our experience, however, that advantage is minimal. Why? Because if you can push AB test data from any platform into your analytics software, it essentially doesn’t matter whether the AB testing and analytics software are made by the same company.

Here are links to integrating with Analytics:

- VWO to Google Analytics

- VWO to Adobe Analytics

- Optimizely to Google Analytics

- Optimizely to Adobe Analytics

Perks of VWO’s “CRO Package”

One unique feature of VWO that we think is pretty useful is what they call their “CRO” package. To be clear, you can buy just AB testing from them, or add this CRO package on top. You can learn all the details on their CRO page, but we want to highlight a few features we think are worth considering here.

In short, their built in user research tools, which they group under “Analyze”, are pretty useful when offered in a single platform that also does AB testing.

Here’s why: Yes, you can get heatmaps, recordings, forms and surveys in many other tools. But, having them integrated with your AB testing tool lets you get heatmaps or recordings, for example, on a variation by variation basis.

This means you can see differences in user behavior between the original and the variation of an AB test.

The need for this comes up in our normal work with clients all the time. You set up and run a test, and just like we explained in the analytics integration section, you realize after the results come in that you’re curious about user behavior that can’t be measured by only the goals you thought to create: (“Did they actually use this feature?” “Did they scroll a lot to see more images?” etc.) Other times you may want to see if you missed something at the QA step and want to see recordings to make sure customers did not encounter an error in a variation you designed.

It is possible to integrate a third-party analysis tool like Hotjar or FullStory with your AB testing tool, but it’s more tedious and, in our experience, sometimes doesn’t work well. With VWO, you can easily get heatmaps and recordings for each variation.

Actual AB Test Development

Our developers don’t see much of a difference in developing on any of the three platforms. In all cases, developers typically build a variation locally, then upload the code into the platform and test it via preview links. All the platforms seem to handle this just fine.

Other Features

The above sections were the things that matter to us the most. Here we’re going to rapidly go over other features you may want to consider:

- Multivariate testing – Advanced CRO teams may want to run multivariate tests (MVT), which are possible in all three platforms

- Server Side testing – Optimizely has a robust “Full Stack” product offering that lets you run server side AB tests and AB tests on iOS and Android. VWO also lets you run tests on mobile apps. Adobe Target also lets you run server side tests.

- Personalization – All 3 platforms advertise having personalization solutions, although it seems Target and Optimizely have the most robust offerings here, especially at the enterprise level.

Pricing and Which to Choose

It’s hard to discuss pricing definitively because all 3 optimization platforms (like many software platforms) change their pricing often, pricing is a function of your traffic levels, pricing is a function of the features you buy, and pricing is also a function of other licenses you may already have (e.g. Adobe Experience Cloud). So, sadly, it’s not as simple as us listing out prices here.

But in general, we’ve found that VWO (here is their pricing page) is by far the most affordable testing solution, followed by Target, then Optimizely.

For more than 2 million visitors per year to test with, expect to pay at least $1,000 per month, with plans quickly getting much more expensive.

For clients that are just starting AB testing, we almost always suggest VWO because it’s the most affordable. We also think the majority of your success in a CRO program has to do with the strategy you implement and knowing what to test, not the software you choose.

That said, Optimizely is also a good option to consider in some cases. You may think you’ll need things like enterprise-level personalization or personalization across multiple device types, or Optimizely’s unique features may be important to you.

Finally, if you already are on Adobe Marketing Cloud and want to make sure you stay integrated, Target may be a good option to consider.

Not sure? Feel free to reach out and tell us what you’re considering, and we’ll give you our honest opinion.

And if you are an ecommerce brand looking to implement a strong, results driven CRO program with a team that has lots of experience doing this, you can learn more about working with us here.

[Note: This article does not evaluate other AB testing solutions such as Google Optimize, Monetate, AB Tasty, and more. We may write follow-up articles comparing these platforms.]

2 Comments

Hari Prasad

August 27, 2019

Thanks Devesh. It is super-informative. Surprisingly, yours is one of the few articles that touches on this topic.

Can vouch for the last sentence:

“your success in a CRO program has to do ….. not the software you choose.”

There is a lot of noise around the tools, when the right discussion has to be around the experiments.

Becmen

October 5, 2019

This article is very helpful; it covers every part needed to understand the differences between the platforms before investing. The technicality is quite overwhelming, but kudos on all the attention to detail.