In our work with dozens of ecommerce companies over the last 5 years, we’ve noticed that the design and UX of the add to cart section (buy buttons, options, quantity controls) is debated a lot.

- Should you make the add to cart button sticky?

- Should we make the entire section sticky?

- Should we hide some selections (size, color, flavor) or show all?

- Should we show all sizes as buttons or shove them in a dropdown?

- In what order should we present the different options?

This case study is about the first question.

In this article, we present results from a couple of AB tests for an ecommerce site in the supplement space, where we tested making the entire add to cart area sticky versus not sticky on desktop devices.

The variation with the sticky add to cart button, which stayed fixed on the right side of the page as the user scrolled, showed 7.9% more completed orders with 99% statistical significance. For ecommerce brands doing $10,000,000 or more per year in revenue from their desktop product page traffic, a 7.9% increase in orders would be worth at least $790,000 per year in extra revenue.
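The revenue figure is simple arithmetic, sketched below in Python. It assumes average order value stays the same, so that revenue scales directly with the number of orders. The function name and parameters are ours, chosen for illustration.

```python
def extra_annual_revenue(annual_revenue: float, order_lift: float) -> float:
    """Estimate extra yearly revenue from a relative lift in orders.

    Assumption: average order value is unchanged, so revenue grows
    in proportion to the number of orders.
    """
    return annual_revenue * order_lift


# $10,000,000 per year from desktop product page traffic, 7.9% more orders
print(round(extra_annual_revenue(10_000_000, 0.079)))  # prints 790000
```

If the extra orders skew toward smaller baskets, the real figure would be lower, so treat this as a rough estimate.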

But not all instances of making add to cart controls sticky have increased conversion rate, as we share below.

In our experience, sticky add to cart areas are less common on desktop product pages than on mobile, so this result suggests that many ecommerce brands, marketing teams, and store owners may benefit from AB testing a sticky add to cart button, or an entirely sticky add to cart area, on their product detail pages.

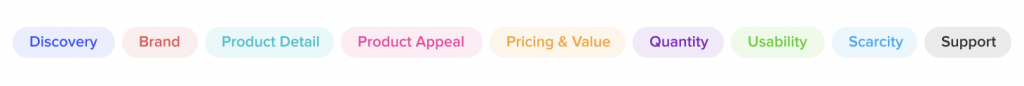

In general, this type of test is squarely in the “Usability” purpose of our Purpose Framework.

Usability tests are changes to UX, and they’re typically what most people think of when they hear “AB testing”. But generally, they aren’t where you get consistent, long-term increases in conversion rate, because most modern ecommerce websites already have good enough UX. Instead, we suggest: (1) tracking and plotting which purposes you’re testing more often and which are winning and (2) being more intentional about deciding which purposes are likely to move the needle for your customers and systematically focusing on them.
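Suggestion (1) can start as a simple tally. Here is a minimal Python sketch, assuming you keep a log of each test’s purpose and whether the variation won. The log entries are made up for illustration, and `win_rate_by_purpose` is a hypothetical helper name.

```python
from collections import defaultdict

# Hypothetical test log: (purpose, did the variation win?). Made-up data.
tests = [
    ("usability", True),
    ("usability", False),
    ("usability", False),
    ("desirability", True),
    ("desirability", True),
]


def win_rate_by_purpose(log):
    """Return {purpose: (tests_run, wins, win_rate)} for (purpose, won) pairs."""
    counts = defaultdict(lambda: [0, 0])  # purpose -> [tests_run, wins]
    for purpose, won in log:
        counts[purpose][0] += 1
        counts[purpose][1] += int(won)
    return {p: (n, w, w / n) for p, (n, w) in counts.items()}


for purpose, (n, wins, rate) in win_rate_by_purpose(tests).items():
    print(f"{purpose}: {n} tests, {wins} wins, {rate:.0%} win rate")
```

With a real log, the same tally shows both where you spend your testing effort and where the wins actually come from.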

We explain more in our Purpose Framework article linked to above for those that are curious. You can also see all ecommerce A/B tests we’ve ever done, organized by purpose, in our live database.

Note: If you’d like to run AB tests like this to help increase your ecommerce site’s conversion rate or improve user experience, you can learn about working with our ecommerce CRO agency here.

Building a Data Driven Culture: AB Tests Can Help Settle Endless Debates

The conference room: where the sticky add to cart button is likely debated non-stop with little useful progress made.

When you read about AB tests online, most articles follow a nice, predictable pattern: (a) we had a great hypothesis, (b) we tested it, and (c) it worked and got a conversion lift!

But when you do enough AB testing (we run hundreds of AB tests on ecommerce sites every year, which we transparently catalog here), you come to learn that most tests don’t end up so neat and clean.

So instead, we urge you to think of an alternative, but also valuable use case of AB testing:

Run a test to learn about your customers even when you could make arguments for either variation being “better” and there isn’t consensus on which one is “obviously going to win” (a phrase we often hear clients use).

Let’s use this article’s example to see why this “AB tests for learning” use case is so useful. In the traditional design process, people in the company would debate in a conference room (or by email, or in Slack) whether to have a sticky or non-sticky add to cart area on their product page.

They’d go in circles fighting with each other about which is better, which is more on brand, which competitors do something similar, and on and on.

Then, either the higher ranking person wins or an executive steps in and gives their official decision like Caesar at the Colosseum (even though 99% of the time the executive is not a UX expert).

But in reality, neither side knows which will do better. Both arguments are legitimate. And the one important contingent that does not have a seat at the table is the customer. An AB test lets your customers give their input.

And, finally, whichever variation “wins” is important but not as important as the learnings about how your customer thinks, what they prefer, and what is more persuasive to them, all of which you could learn by simply running a test.

So, with all this on our minds, we ran this test.

The Variations: Fixed vs. Sticky Add to Cart Buttons

The variations were simple. In one of them (A), the buy box was not sticky, and in the other (B) it was sticky. (Aside: Read why we anonymize clients here.)

In this case, the client’s product page had a sticky buy box (variation B) to begin with, and it hadn’t yet been tested. We decided to test it because the brand had a content-focused culture, so we felt it was important to learn how much users want to be left alone to read content versus having a more in-your-face request to buy following them down the page.

One can make a theoretical argument for both variations:

- Argument for variation A (not sticky): You don’t want the site to act like a pushy salesperson, hovering over your shoulder when you’re just trying to read about the product and its benefits. It will turn people off.

- Argument for variation B (sticky): People can read about the product just fine, and reminding them of the ability to add to cart will increase the percentage of people that do so.

This is why, by the way, we use The Question Mentality and base A/B tests on a series of questions instead of old-fashioned hypotheses, where someone pretends they know what the outcome will be (“Sticky is better! Trust me!”).

In this test there are really just two main questions to answer:

- Does making the add to cart area sticky affect add to cart rates?

- Does it affect conversion rate too? In other words if people add to cart more, do they actually check out more?

Results: Sticky Add to Cart Button Gets More Orders by 8%

In this test, the sticky add to cart variation showed 7.9% more orders from the product pages with 99% significance. The sticky version also showed an 8.6% increase in add to carts with 99%+ significance. The test ran for 14 days and recorded approximately 2,000 conversions (orders) per variation.
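For readers who want to check significance on their own tests, here is a minimal Python sketch of a standard two-proportion z-test. It is not necessarily the method our testing tool used. The visitor counts are hypothetical (we only report order counts above), and the function name is ours.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(orders_a, visitors_a, orders_b, visitors_b):
    """Return (relative_lift, z, two_sided_p) for B compared with A."""
    rate_a = orders_a / visitors_a
    rate_b = orders_b / visitors_b
    # Pooled conversion rate under the null hypothesis of no difference
    pooled = (orders_a + orders_b) / (visitors_a + visitors_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_b / rate_a - 1, z, p_value


# Hypothetical traffic: 40,000 visitors per variation (not from the test).
# Orders: roughly 2,000 without sticky, and 7.9% more (2,158) with sticky.
lift, z, p = two_proportion_z_test(2000, 40_000, 2158, 40_000)
print(f"lift={lift:.1%}, z={z:.2f}, two-sided p={p:.3f}")
```

The exact p-value depends on the real visitor counts and on whether the tool reports a one-sided or two-sided figure, so use this only as a sanity check.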

Referencing the arguments for either side above, this test gave the marketing department and us valuable information about the customer (and saved us from a potentially conversion-hurting future change: undoing the sticky add to cart area).

Despite this brand’s heavy focus on content, despite the customers’ need to read a lot about product uses, benefits, ingredients, and more, having an ever-present add to cart area seemed to be okay with the customer. It did not annoy the customers, and in fact seemed to increase the percentage of them that decided to purchase. This is a useful learning, despite this test largely being in the usability category, not desirability.

(Note: This is true of this store, it may not be true of yours. Hence we always suggest at the end of case studies that you should “consider testing” this. We don’t say you should just “do” this.)

This learning can actually be extended beyond the product pages to content pages such as blog posts where we can test being more aggressive with product links and placements to see if similar results can be achieved there.

This is why we love using AB testing for learning.

Update: Sticky Add to Cart Button on Mobile Product Pages Also Increased Conversion Rate

Since first writing this case study, we tested multiple UX treatments for a sticky add to cart button on the mobile PDP of this exact same supplement ecommerce store. The smaller screen real estate on mobile devices, of course, means finding CTAs like the add to cart button can be more difficult, so making the add to cart button sticky could improve user experience.

We tested three variations: (A) no sticky add to cart button; (B) a sticky add to cart button that simply scrolls the user to the add to cart area on the product page; and (C) a sticky add to cart button that causes the add to cart area to slide up from the bottom of the page like a hidden drawer.

We observed a 5.2% increase in orders for variation C where the add to cart area slides up like a drawer, with 98% statistical significance. This test ran for 14 days and had over 3,000 conversion events (orders) per variation (so over 9,000 conversion events total).

Add to cart clicks increased by a whopping 11.8% on variation C (>99% statistical significance) and by 6% on variation B. So actual use of the button was substantially increased by making the add to cart button sticky on the mobile PDP.

Variation B — where clicking on the sticky add to cart button simply scrolls users to the add to cart area on the PDP — on the other hand showed no statistically significant difference in conversion rate from the original. As per an insightful reader comment below, this discrepancy between variation C showing a clear lift and variation B showing no difference could be explained by:

- The slide-up “drawer” of add to cart functionality (choosing a flavor, quantity, etc.) in variation C may have kept users focused on that step because it feels like you’re in a new “sub-page” of sorts instead of just scrolling to another part of the PDP.

- It also means the add to cart functionality (like choosing flavors) took up no space on the PDP itself in variation C, so users got to see more persuasive “content” about the product on the PDP.

This suggests that similar conversion gains can be realized on mobile product pages, but the details of how to implement them and the UI/UX that will cause a conversion increase are important.

Questions? Let’s discuss in the comments.

If you have questions about whether your store should test sticky add to cart functionality, how to execute it (e.g. Shopify plugins vs. manual coding), or about working with us, you can ask us in the comments, send us an email, or learn about working with us.

Or join our email newsletter to get our latest articles and AB tests.

Our Foundational Ecommerce CRO Articles

- Our Purpose Framework

- Our Question Mentality

- Our live database of all A/B tests we’ve ever done

- Our collection of ecommerce UX breakdown videos

5 Comments

Maxime Biais

October 21, 2019

Hello Devesh,

Thanks for this article, very useful. Really like the content of this website also.

Regarding the test you ran on mobile, what was the performance of version B at the end? Did version C perform better than B?

Would you have any advice regarding the behavior once you click on the sticky add to cart?

Thanks

Maxime

Devesh Khanal

November 2, 2019

Maxime,

Great question. VB did not show a statistically significant lift over the original; only VC did. But the difference between VB and VC was more than just having a price next to the add to cart button in VC. In VB, clicking on the sticky add to cart button scrolled the user up to the add to cart area of the page. Whereas, in VC, clicking the add to cart button revealed add to cart details that slide up from the bottom of the mobile screen. We think this is the key to why VC won:

– The slide up “drawer” of add to cart functionality (choosing a flavor, quantity, etc.) may have kept users focused on that step because it feels like you’re in a new “sub-page” of sorts instead of just scrolling to another part of the PDP.

– Also that means on the PDP itself there was no space taken up by add to cart functionality like choosing flavors so users got to see more “content” about the product on the PDP.

This is a great question and I’ve updated the post to reflect this discussion.

Maxime Biais

January 15, 2020

Really helpful Devesh, thanks a lot for your answer.

We actually want to test with format of version C (name, price and CTA).

The question we are asking ourselves also is when the sticky should appear on the pdp. We have 2 options:

– sticky shows up as soon as you land on the page => Does it make sense to present it before visitor sees color and size selection? Distracting or not for the visitor?

– sticky shows up when you scroll down and pass the current call to action => less intrusive but might miss some add to cart…

Guess the only way to find out is to test 😊

If you have an advice, happy to hear it!

Thanks

Mar

January 12, 2020

Hi Devesh,

Thank you very much for this article. I am new on your site and getting familiarized with the work you do.

As a suggestion, I would consider changing the colour scheme that you use for selecting the best performance Versions in your graphics. Using red to select your top performer Versions is confusing as it leads readers to think black is best, red is worst; whereas in reality, when reading your blog post and comments, it’s really the other way around!

Maybe something to AB test 🙂

Thank you!

Devesh Khanal

January 15, 2020

This is a good point. 🙂